The technology works. The language model delivers good results. The first version is ready. And then: nothing. Three weeks after go-live, half the employees aren't using the new system. Some report technical issues, others quietly ignore it, and a few pointedly carry on working as before.

This is not an edge case. It's the norm. AI rollouts rarely fail because of the technology. They fail because companies underestimate the human side: the fear of change, the lack of guidance, the unclear communication.

Winning employees over to AI projects doesn't require a change management programme with workshops and glossy slides. It requires a staged model that combines tolerance at the start with clear expectations at the end, and concrete results in between that speak for themselves.

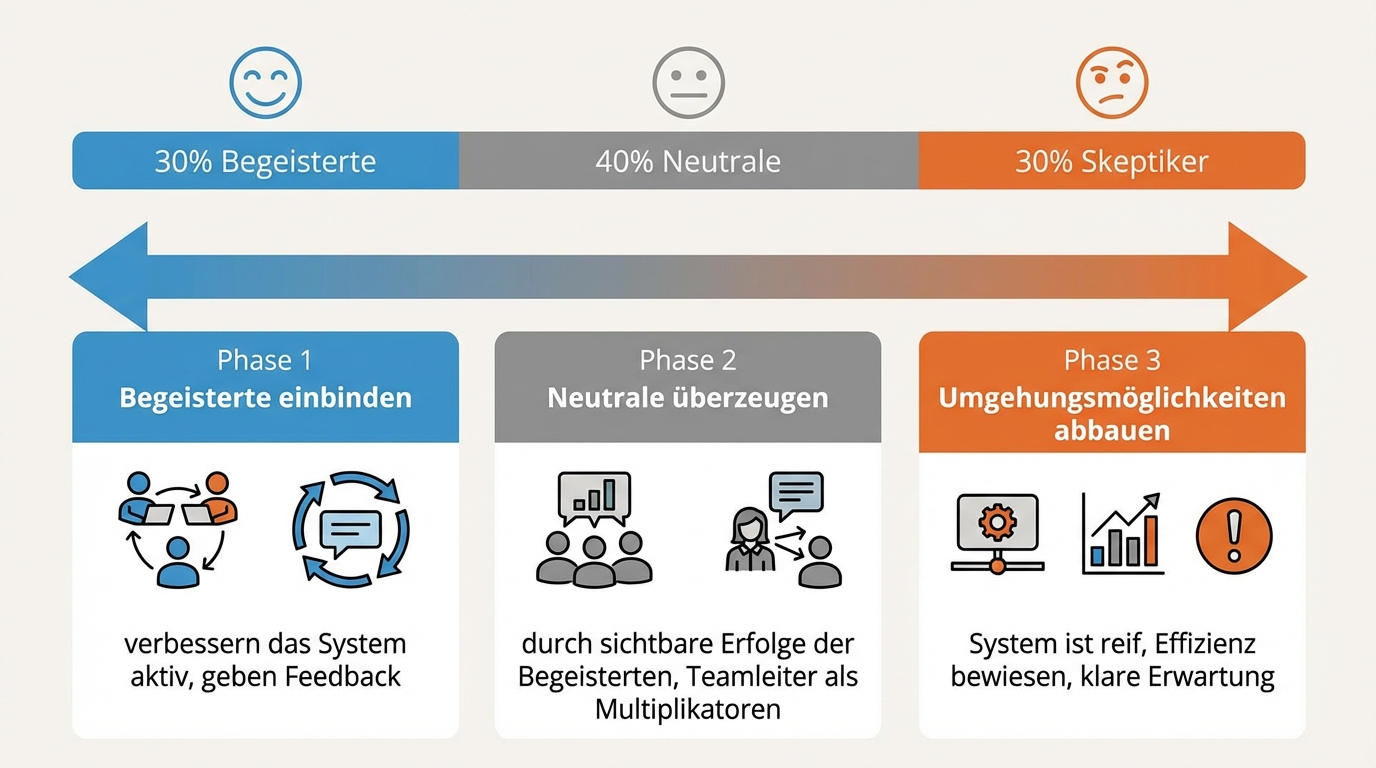

30 percent resistance is normal. Don't solve a problem that isn't one.

As a rule of thumb, roughly 30 percent of people in any organisation resist change by default. That is normal and not within the project team's power to change. Trying to bring everyone along at once costs the time and energy needed for the other 70 percent.

That doesn't mean giving up on the 30 percent. It means addressing them at the right time. The proven approach is a staged model with three steps. First, identify and involve the enthusiasts who actively help improve the system. Second, convince the neutral employees through the enthusiasts' visible success, ideally with team leads acting as multipliers. Third, and only once the system is mature and the early adopters are demonstrably more efficient, gradually retire the old workarounds.

Experience shows that many of the initial resisters are convinced by the early adopters' success before enforcement ever becomes necessary. The productivity gains make the final step unavoidable for competitive reasons anyway.

Parallel operation removes fear, not productivity

The biggest lever for adoption is a psychological safety net: the old process remains available at all times. No employee has to worry about being unable to do their job if the new system doesn't work as expected.

That sounds like double the effort. It isn't. Parallel operation doesn't mean every task is processed twice. It means the old way stands ready as a fallback for cases the new system doesn't cover yet. As soon as the AI solution is in partial production use, it already saves time on every task it handles. The old method isn't actively used; it simply exists as insurance.

This approach works because it drastically lowers the threshold for first use. Someone who knows they can always go back is more likely to try something new. And once they've tried it, they often realise themselves that the new way is less effort.

One process owner per topic reduces mental load to a manageable level

An AI system in daily operation requires hundreds of small decisions per week: Is this answer good enough? Is a source missing? Is the wording right? Does a rule need adjusting?

No single employee can handle this for the entire organisation. The solution: for each domain there is an internal process owner who has a direct line to the development team. This person doesn't need to be a technician. They need domain knowledge and the willingness to evaluate the system regularly.

In practice, this process owner invests roughly four hours a week at the start, then about two. They don't make the small decisions individually, but shape the configuration so the system gets most of them right automatically. The knowledge they contribute is documented in the system's configuration and thus available to others as well. In larger organisations, several process owners cover different areas and share insights regularly.

This is a deliberate investment on the client side. It is among the most valuable time a domain expert can spend, because it multiplies their own capability across the entire team.

Go-live does not equal adoption. Good tools don't sell themselves.

The hope that a good tool will gain traction on its own because it "takes so much pain out of people's work" doesn't hold up in practice. Three weeks after a successful go-live, adoption in one project was still far from 100 percent, even though there was no negative feedback.

Correcting this expectation matters: even a tool that measurably saves time needs active steering. Early user feedback tends to be technical (bug reports, interface issues), not substantive ("this tool helps me with my work"). Actual adoption is hard to read from the feedback in the first few weeks.

Two levers have proven effective. First, involve employees in building the system. Someone who has watched an AI solution go from idea to working system in three weeks develops a different understanding of what's possible. Second, have leadership state clearly that the new way is the expected standard. Not as a threat, but as guidance: the manual path isn't forbidden, but it's no longer the intended path.

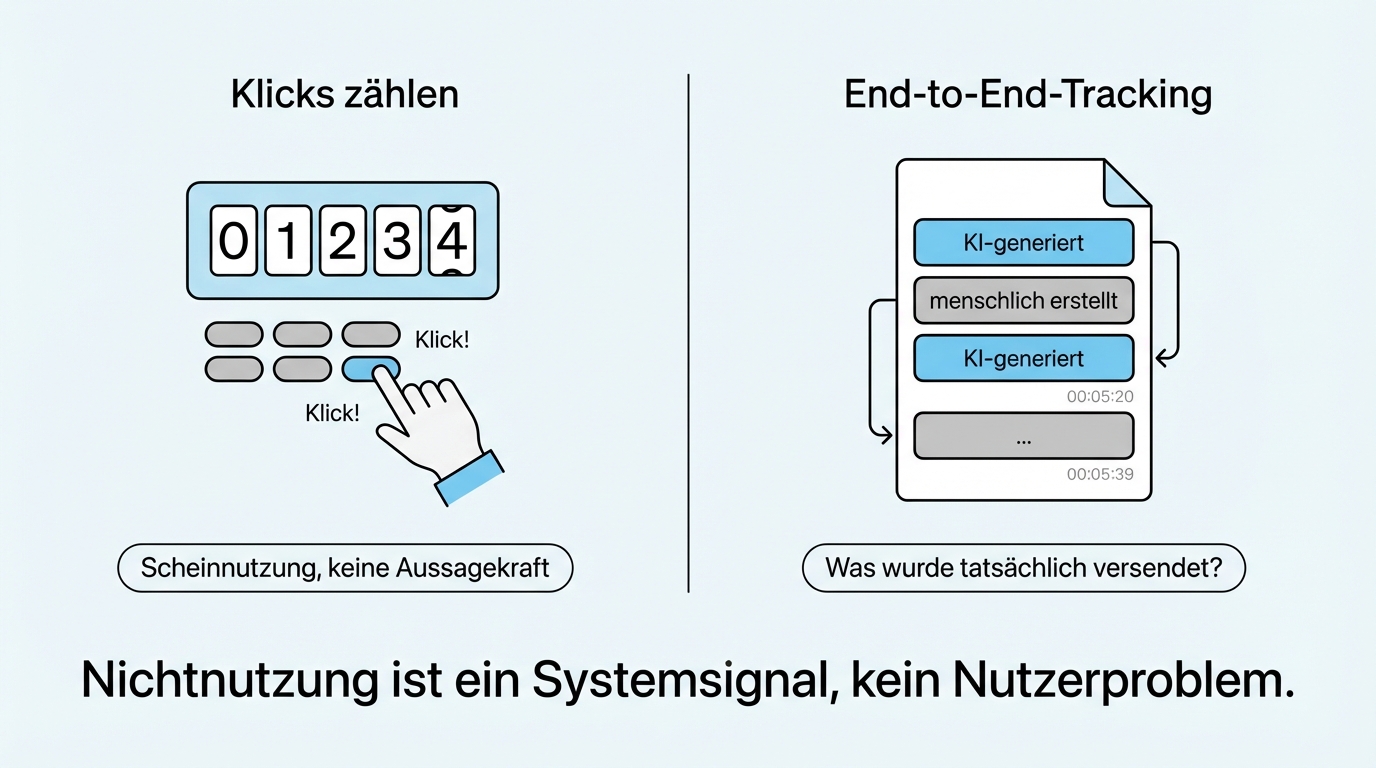

Measuring adoption means measuring results, not counting clicks

How do you tell whether employees are truly using an AI system and not just clicking "Generate" for show?

The answer lies in end-to-end tracking. Instead of counting how often someone opens the tool, you track all the way to the final output: what was actually sent or approved? Each item in the result is individually attributed: AI-generated or human-created. If someone generates daily and then discards everything, that becomes visible.

This tracking serves learning, not surveillance. When employees regularly discard AI results, that is not a user problem but a system signal: something is missing. Perhaps the sources are wrong, perhaps the tone doesn't match the company culture, perhaps the system needs more context. Non-use is an indicator of where the system needs to improve, not evidence that employees are refusing.
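The attribution logic described above can be sketched in a few lines of Python. This is a minimal illustration under assumptions, not a description of any particular tracking system: the record type `OutputItem`, its fields `user`, `origin` and `in_final`, and the function `adoption_summary` are all hypothetical names invented for this example. It computes two figures per person: the share of the final (sent or approved) output that is AI-generated, and the share of AI-generated items that were discarded.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class OutputItem:
    """One item of work output (hypothetical record layout)."""
    user: str
    origin: str     # "ai" or "human": who produced the item
    in_final: bool  # True if the item was actually sent or approved


def adoption_summary(items):
    """Per user: AI share of the final output and discard rate of AI items."""
    counts = defaultdict(lambda: {"final_ai": 0, "final_total": 0, "ai_total": 0})
    for item in items:
        c = counts[item.user]
        if item.in_final:
            c["final_total"] += 1
            if item.origin == "ai":
                c["final_ai"] += 1
        if item.origin == "ai":
            c["ai_total"] += 1

    summary = {}
    for user, c in counts.items():
        # Guard against division by zero for users without any output yet.
        ai_share = c["final_ai"] / c["final_total"] if c["final_total"] else 0.0
        discarded = c["ai_total"] - c["final_ai"]
        discard_rate = discarded / c["ai_total"] if c["ai_total"] else 0.0
        summary[user] = {
            "ai_share_of_final": ai_share,
            "ai_discard_rate": discard_rate,
        }
    return summary


# Illustrative data only: "anna" keeps her AI results, "ben" generates and discards.
items = [
    OutputItem("anna", "ai", True),
    OutputItem("anna", "ai", True),
    OutputItem("anna", "human", True),
    OutputItem("ben", "ai", False),
    OutputItem("ben", "ai", False),
    OutputItem("ben", "human", True),
]

for user, stats in adoption_summary(items).items():
    print(user, stats)
```

A click counter would rate both people in the sample data as active users. The end-to-end view shows that one of them discards every AI result, which in the spirit of this section is a prompt to ask what the system is missing for that person's work. The same calculation could just as well be grouped by team or domain instead of by individual.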

AI competence is the new Excel competence

Twenty years ago, someone in the organisation had to build Excel competence to solve a specific problem 50 or 100 percent better. Today it's AI competence. And the bigger lever isn't in the individual project, but in the organisation itself learning where AI creates value.

Someone with general AI problem-solving competence finds use cases on their own. They don't need to wait for a consultant to tell them where AI makes sense. The best AI projects don't originate at the IT manager's desk but in the daily work of the departments: a sales rep who realises their proposal research can be automated; a clerk who recognises that their document review follows a pattern.

Building this competence doesn't happen through classroom training, but through a controlled handover of responsibility. In the first weeks, the development team makes the changes. Then domain users are gradually enabled: not to become full developers, but roughly 20 percent developers. Enough to formulate better requirements and make simple adjustments independently.

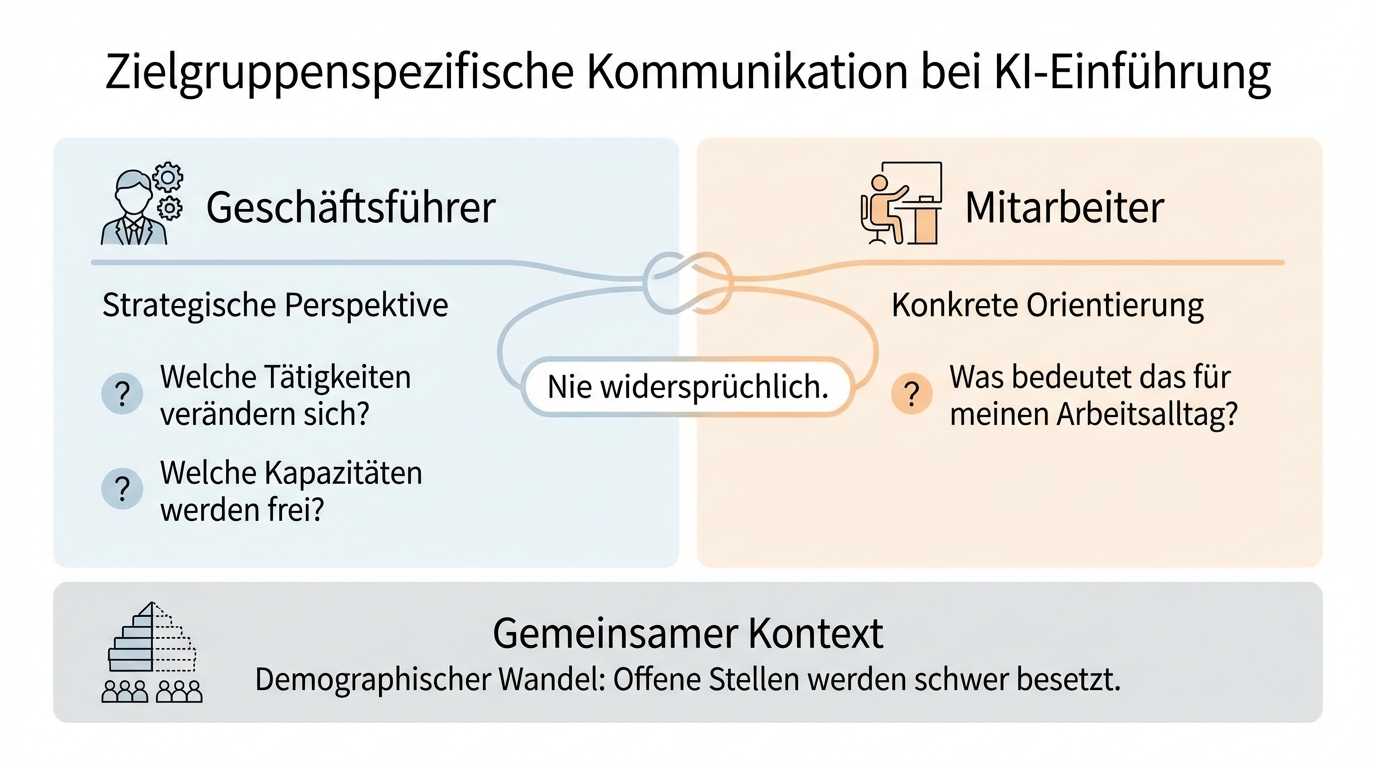

Honest communication instead of false promises

The most uncomfortable truth about AI rollouts: AI does take work away. Not from everyone, and not immediately, but the comfortable, repetitive tasks disappear over time. Concealing this creates more resistance than naming it.

Communication must be tailored to the audience, but never dishonest. The managing director needs the strategic perspective: which tasks change, which capacity is freed? Employees need orientation: what does this mean concretely for my daily work? The answer differs by role, but it must never be contradictory.

The honest truth behind it: demographic change means positions are already hard to fill. Freed-up capacity doesn't translate into layoffs but into previously neglected tasks. The best domain experts focus on the work that requires experience and judgement, and AI makes them more powerful in doing so.

The workforce question remains a leadership task, not a technology question. For competitive reasons, the change is unavoidable. But the way it is communicated and supported determines whether employees experience it as a threat or as an opportunity.

What a successful rollout requires

Three levers determine success:

Executive backing from day one. Without support from the top, an AI project cannot hold its ground politically against internal resistance. The easier path ("automation through the existing system") will always be floated as an alternative. Only measurable results and clear backing keep things on course.

One process owner per domain. An internal owner per area who evaluates and improves the system. Roughly four hours a week at the start, then about two. Not a technician, but a domain expert willing to embrace something new.

Measurable results instead of promises. Without communicable numbers, the status quo always wins. End-to-end tracking shows the value AI actually delivers. Not "the tool is being used," but "these results were produced faster and better with AI support."

The next step

We start with a conversation to clarify together: where are you in your AI rollout? What resistance are you seeing? Which processes should change? Afterwards you'll be able to assess whether our approach fits your situation.

No strategy project, no consulting fee for the initial conversation. Just an honest assessment of how you can win your team over to the change ahead.