Every company has that one person. The one who has been solving the complicated cases for 25 years. The one who knows why product X doesn't work with material Y, even though it should on paper. The one who pulls the right solution from memory for every custom configuration.

What happens when that person retires? The honest answer in most companies: the knowledge leaves with them, because it isn't written down anywhere. It lives in experience, intuition, and a thousand small decisions that were never documented.

AI changes that. Not through a documentation project that nobody follows through on, but through an iterative process where knowledge is extracted during daily operations. Step by step, correction by correction, until the system solves the cases that only the expert used to handle.

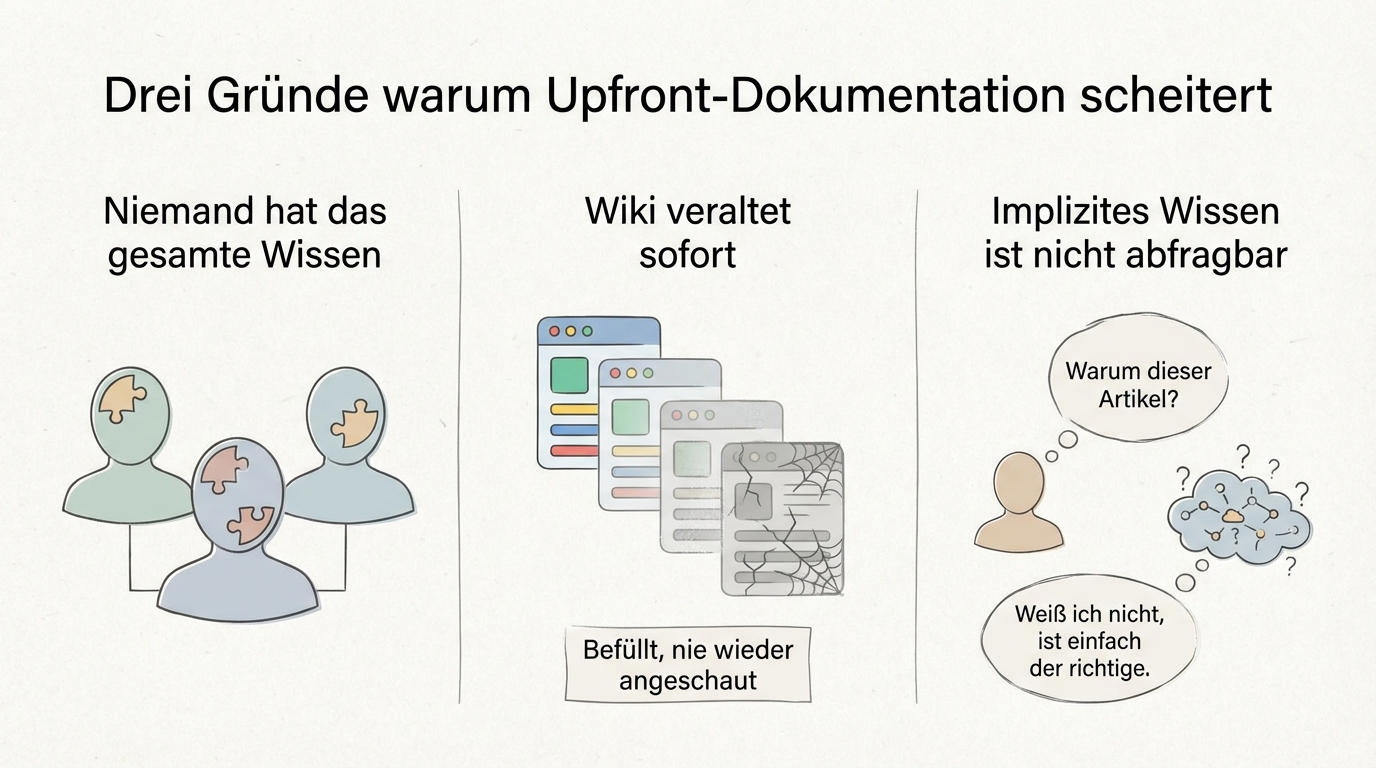

Why upfront documentation fails

The obvious idea: "Let's write the knowledge down before people leave." In practice, this almost always fails.

First: nobody has the complete knowledge. At one complex manufacturing company, we found that no single person could describe the entire proposal creation process. The modular logic was too complex, the edge cases too numerous, the implicit rules too deeply buried. The attempt to write everything down upfront would have failed simply because nobody knew what "everything" actually was.

Second: documentation that nobody actively uses goes out of date immediately. Every company has a wiki that was filled with great effort and then never consulted again. The problem isn't a lack of will; it's the missing connection to daily work.

Third: implicit knowledge can't be queried. When an expert has been writing proposals for 20 years, they make dozens of unconscious decisions per case. "Why did you pick that article?" "I don't know, it's just the right one." This knowledge only becomes visible when a concrete situation demands it.

How AI extracts knowledge during daily operations

The approach is the opposite of upfront documentation. Instead of writing everything down in advance, the AI starts with what already exists and learns iteratively.

Starting point: existing data. Every company has historical data: past proposals, answered enquiries, client communication. These documents aren't a perfect knowledge base, but they already contain a large share of the decision logic. One company started with ten answered tenders and derived the first rules for automated proposal processing from them.

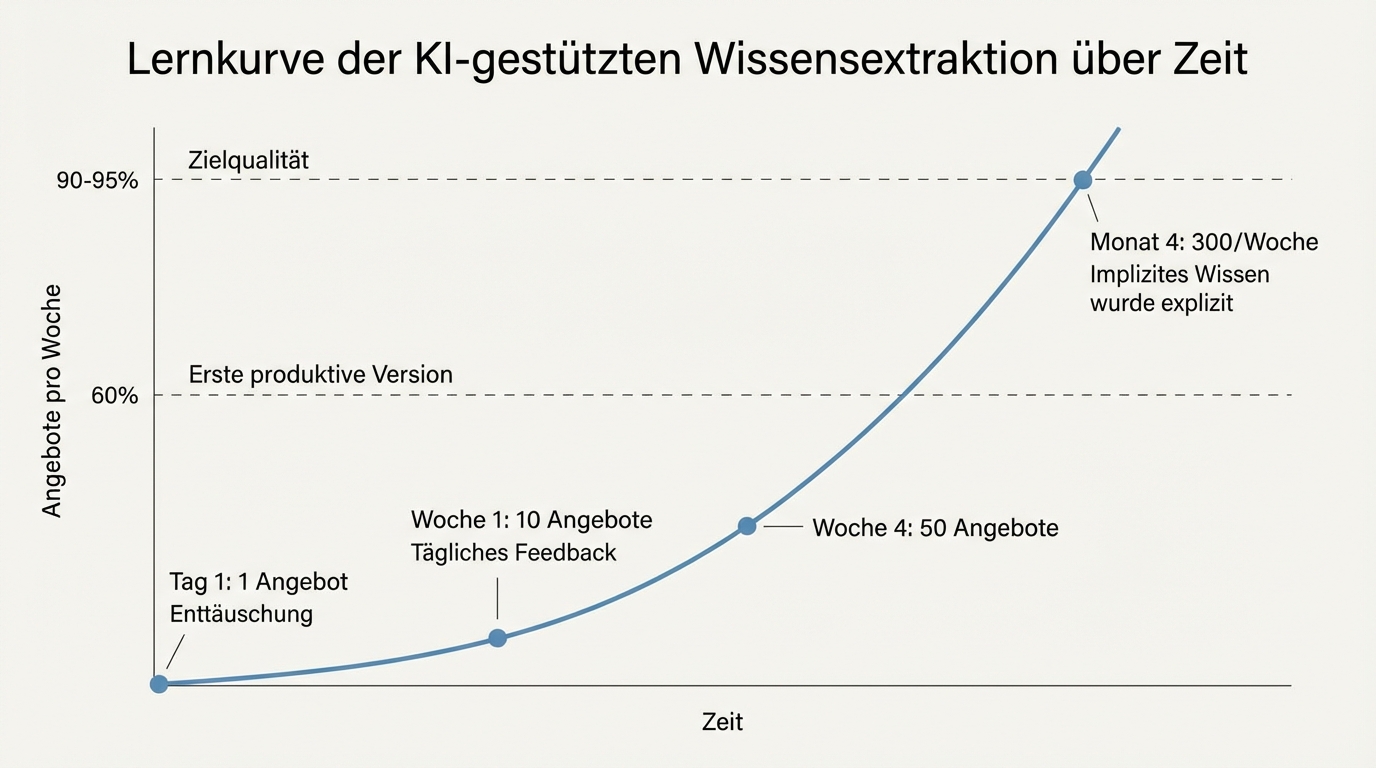

First version: 60 percent quality. The AI produces an initial draft that works for typical cases but still fails on edge cases. That's not a bug; it's the plan. The first version exposes exactly where the gaps are: knowledge that nobody could put into words before.

Feedback loop: the domain expert corrects. Now the decisive role comes into play: the domain expert reviews the results and corrects them. "That's wrong, because with this material the temperature resistance isn't sufficient." Or: "The product fits, but only in combination with component Y." Every correction is incorporated into the AI's instructions, so each mistake only has to be corrected once.

Scaling: from 1 to 300 per week. One company created one proposal on the first day and was disappointed. Two on the second day. Four on the third. The reason: the team sat down every day and made the implicit knowledge in their heads explicit. After a few months, the system was processing 300 proposals per week.

This process is comparable to onboarding a new employee. You sit alongside them, explain, correct, and after a while they work independently. The difference: the AI forgets nothing, and every correction applies to all future cases, not just the next one.

What the domain expert needs to invest

Two to four hours per week. That's the realistic time commitment for the domain expert who brings knowledge into the system. More corrections at the start, later only occasional adjustments for edge cases.

This investment sounds small, and it is. But it's decisive. Without a person who truly understands the process and is willing to give feedback, the entire approach doesn't work. The good news: it doesn't have to be a technical person. The entire system works in natural language. "When the customer orders custom configuration X, component Y must be included" is an instruction the domain expert can formulate without writing a single line of code.

The question "Can we afford to pull our best expert away for four hours a week?" answers itself when you do the maths honestly: those four hours are the highest-return time this expert spends. For the first time, the knowledge is being passed on in a way that truly scales. Not to one person who might quit tomorrow, but to a system that's available to everyone.

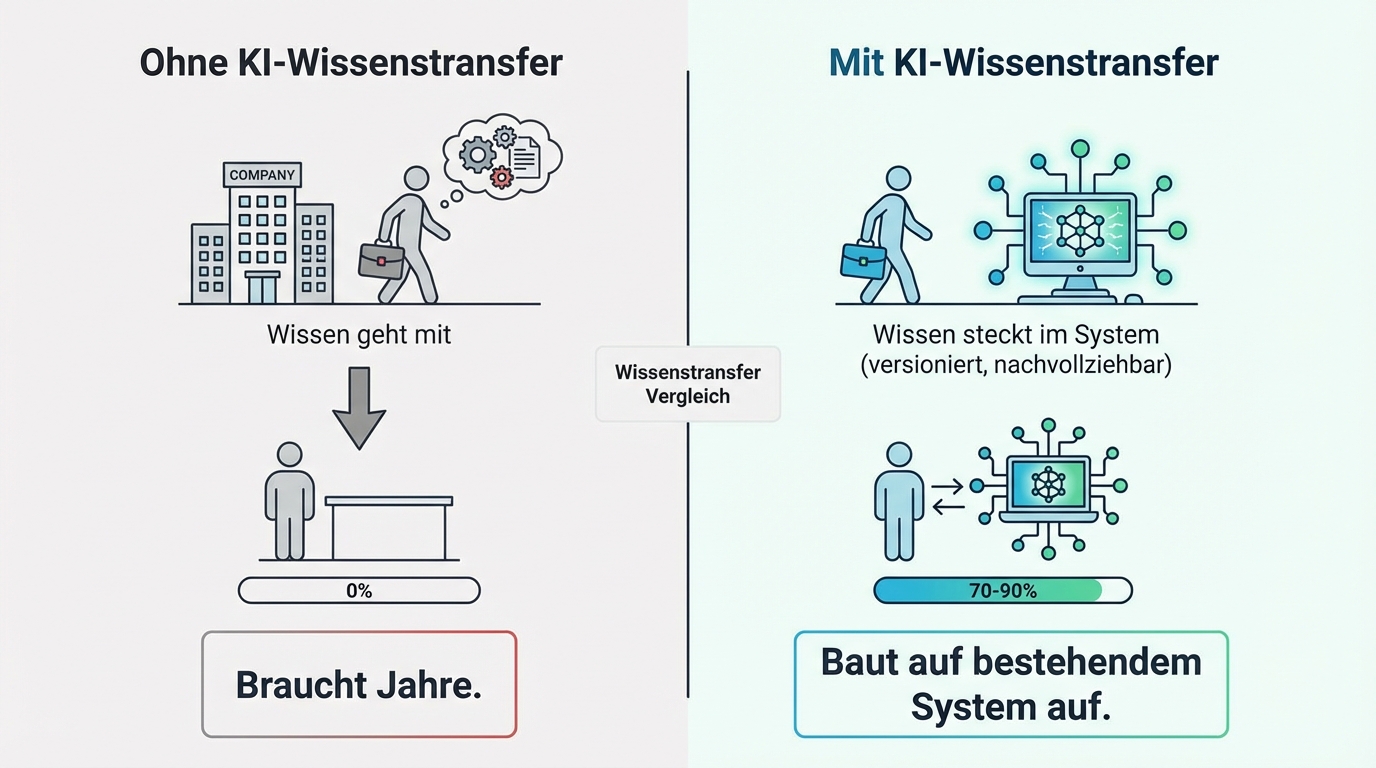

What happens when the expert leaves anyway

The decisive question isn't whether you can complete the knowledge transfer in time. It's whether you are in a better position than before.

Without AI-assisted knowledge transfer: the knowledge leaves with the person. There is no documentation, no transferable knowledge base. The new employee starts from zero and needs years to reach the same level.

With AI-assisted knowledge transfer: the knowledge is explicitly captured in the system's instructions. It's written in natural language, versioned, traceable. When the expert leaves, the knowledge maintenance role does need to be filled again, but the starting position is different: instead of starting from zero, the next person builds on a system that already handles 70, 80, or 90 percent of cases correctly.

In larger projects, there isn't just one person anyway. Two to three specialists maintain different areas of knowledge. A systematic onboarding plan for new team members exists as a by-product, because the instructions themselves are the best documentation of the process.

Knowledge belongs in work tools, not in a wiki

A wiki that needs to be maintained won't be maintained. Experience shows this in every company. Instead, knowledge belongs where it is used: directly in the systems people work with.

When an employee processes a request and the system automatically suggests the right product, that's applied knowledge. When they correct the suggestion and the correction immediately applies to all future cases, that's a living knowledge transfer.

This knowledge is versioned and traceable. You can see at any time when a rule was added and why. You can undo changes if they turn out to be wrong. And you can generate readable documentation from the instructions at any time, if someone needs an overview.
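For readers who want a concrete picture of "versioned and traceable", here is a minimal Python sketch. It is an illustration under simple assumptions, not the system described in this article. It assumes the instructions are a plain list of natural-language rules, and the names `Rule`, `InstructionSet`, `add_rule`, `revert_to` and `render_docs` are hypothetical. Each correction creates a new version that records the date and the reason. Undoing a change re-publishes an earlier version instead of deleting history, and the readable overview is generated directly from the rules.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import date


@dataclass(frozen=True)
class Rule:
    text: str       # the instruction in natural language
    reason: str     # why it was added, e.g. the expert's correction
    added_on: date


@dataclass
class InstructionSet:
    # Hypothetical store: each entry is one complete version of the rule set.
    # Version 0 is the empty starting point.
    versions: list[tuple[Rule, ...]] = field(default_factory=lambda: [()])

    @property
    def current(self) -> tuple[Rule, ...]:
        return self.versions[-1]

    def add_rule(self, text: str, reason: str, added_on: date) -> int:
        """Store a correction as a new version and return its number."""
        self.versions.append(self.current + (Rule(text, reason, added_on),))
        return len(self.versions) - 1

    def revert_to(self, version: int) -> int:
        """Undo changes by re-publishing an earlier version as a new one,
        so the full history (including the reverted rules) stays traceable."""
        self.versions.append(self.versions[version])
        return len(self.versions) - 1

    def render_docs(self) -> str:
        """Generate a readable overview of the rules currently in force."""
        lines = [f"Rules in force (version {len(self.versions) - 1}):"]
        for number, rule in enumerate(self.current, start=1):
            lines.append(
                f"{number}. {rule.text} "
                f"[added {rule.added_on.isoformat()}: {rule.reason}]"
            )
        return "\n".join(lines)


# Example usage with an illustrative rule and date.
rules = InstructionSet()
rules.add_rule(
    "When the customer orders custom configuration X, component Y must be included.",
    "Expert correction on a draft proposal",
    date(2024, 3, 4),
)
print(rules.render_docs())
```

Reverting by appending rather than deleting is a deliberate choice in this sketch: a rule that turned out to be wrong remains visible in the history, together with the reason it was once added.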

Progress isn't measured by an abstract percentage ("We've digitised 40 percent of our knowledge"), but by a practical question: how often does the domain expert still need to correct? Every correction is a signal for missing knowledge. When correction frequency drops over the months, the digitised knowledge is growing.
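Tracking the correction frequency needs very little machinery. The sketch below assumes that each processed case is logged with its month and a flag showing whether the expert had to correct it. The function names, the log format and the sample data are illustrative assumptions.

```python
from __future__ import annotations

from collections import Counter
from typing import Iterable


def correction_rate_by_month(cases: Iterable[tuple[str, bool]]) -> dict[str, float]:
    """Share of processed cases per month that the domain expert had to correct.

    `cases` holds (month, was_corrected) pairs, with month formatted as "YYYY-MM".
    """
    processed: Counter[str] = Counter()
    corrected: Counter[str] = Counter()
    for month, was_corrected in cases:
        processed[month] += 1
        if was_corrected:
            corrected[month] += 1
    # "YYYY-MM" strings sort chronologically, so the result is in time order.
    return {month: corrected[month] / processed[month] for month in sorted(processed)}


def is_improving(rates: dict[str, float]) -> bool:
    """True if the correction rate never rose from one month to the next."""
    values = list(rates.values())
    return all(later <= earlier for earlier, later in zip(values, values[1:]))


# Made-up log: 2 of 4 cases corrected in January, 1 of 4 in February.
log = [
    ("2024-01", True), ("2024-01", True), ("2024-01", False), ("2024-01", False),
    ("2024-02", True), ("2024-02", False), ("2024-02", False), ("2024-02", False),
]
rates = correction_rate_by_month(log)  # {"2024-01": 0.5, "2024-02": 0.25}
print(rates, is_improving(rates))
```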

How knowledge stays current

Knowledge becomes outdated. Regulations change, materials get discontinued, processes get restructured. How does the system recognise that a rule no longer applies?

The primary mechanism is the same as before AI: specialists keep up with their field, read trade publications, and attend training. When something changes, they correct the system as part of their validation role. The difference: the correction takes effect immediately for all future cases, not just the next one.

Beyond that, the updating process can also be automated. In one client project, the system automatically sets expiry dates for sources: six months for fast-moving topics, five years for foundational knowledge. Responsible parties are notified via reports when sources need review. This doesn't replace human curation, but it ensures nothing is forgotten.
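The following is one possible way expiry dates and review reports could work. The sketch assumes that each source carries a category, a responsible owner and the date it was last reviewed. The intervals approximate the six months and five years mentioned above, and fixed day counts ignore calendar months and leap years. The category labels, `Source` and `review_report` are hypothetical names. This is not the client project's actual implementation.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Assumed review intervals, approximating the example in the text:
# about six months for fast-moving topics, about five years for foundational knowledge.
REVIEW_INTERVALS = {
    "fast_moving": timedelta(days=182),
    "foundational": timedelta(days=5 * 365),
}


@dataclass
class Source:
    title: str
    category: str        # hypothetical label; must be a key of REVIEW_INTERVALS
    owner: str           # responsible party to notify
    last_reviewed: date

    @property
    def expires_on(self) -> date:
        return self.last_reviewed + REVIEW_INTERVALS[self.category]


def review_report(sources: list[Source], today: date) -> dict[str, list[str]]:
    """Group the titles of all expired sources by their responsible owner."""
    report: dict[str, list[str]] = {}
    for source in sources:
        if source.expires_on <= today:
            report.setdefault(source.owner, []).append(source.title)
    return report


sources = [
    Source("Material datasheet", "fast_moving", "materials team", date(2024, 1, 1)),
    Source("Welding basics", "foundational", "engineering", date(2022, 1, 1)),
]
print(review_report(sources, today=date(2024, 9, 1)))
```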

The next step

Identify the process in your company where the most expert knowledge lives in the heads of individual people. Bring ten typical cases (enquiries, proposals, transactions) with their corresponding results. In a joint session, we'll give you an assessment of how much of the knowledge can be extracted, what effort is realistic, and how quickly you can expect first results.