Specialist publishers, trade associations, and academies sit on a treasure: years of curated expert knowledge in the form of webinars, expert opinions, handbooks, and journal articles. This knowledge is valuable but hard to access. A tax adviser with a specific question about e-bike taxation has to work through hours of webinar material. A fire safety engineer who needs the regulations for an atrium in Hamburg searches through dozens of guidelines.

An AI expert chatbot makes this domain knowledge interactively accessible: ask a technical question, receive a precise answer, trace every statement back to the original source. The pattern works across domains and has been running in production across multiple fields for over a year, handling tens of thousands of queries per month.

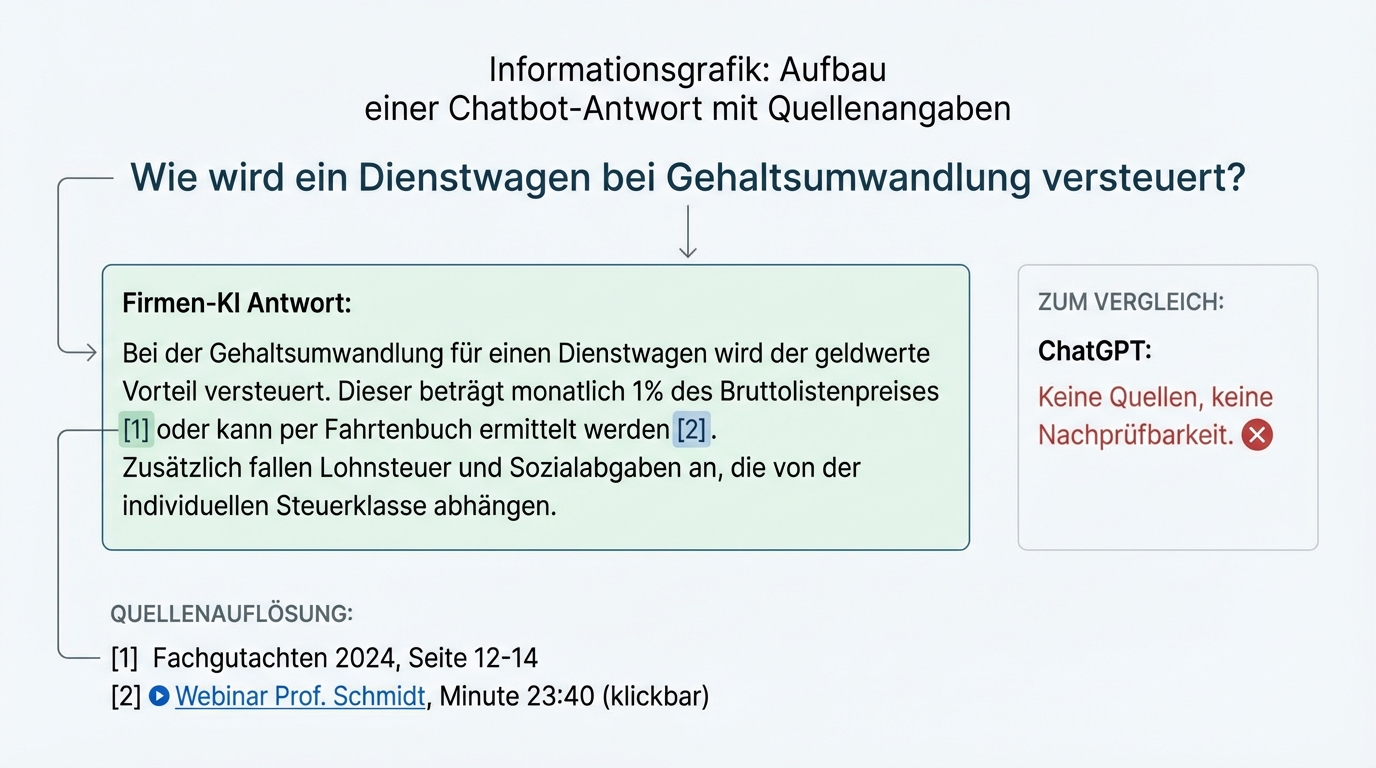

Source citations are not a feature -- they're the baseline

The most important difference between a domain chatbot and ChatGPT is not the answer quality. It's the traceability.

Every answer from a domain chatbot must be traceable to the original source. When a tax adviser receives an answer about VAT, they click the source citation and see the underlying expert opinion. When a participant asks a question about a four-hour webinar, a single click takes them to the exact minute in the video where the answer was given.

Without this transparency, a domain chatbot is worthless. No expert trusts a black box. Professionals need the ability to verify the answer against the original source. This is not an add-on feature -- it's the central trust mechanism, confirmed across multiple domains over 18 months: tax law, occupational safety, fire safety, professional consulting.
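To make the click-through to the exact position concrete, here is a minimal Python sketch of how a retrieved passage could be turned into a deep link and a readable citation label. It assumes a hypothetical `SourceChunk` record that stores a start time for transcript passages and a page number for PDF passages. The `#t=` suffix follows the W3C Media Fragments temporal syntax, and `#page=` is the PDF open parameter most browser viewers honour. The URLs are placeholders.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class SourceChunk:
    """A retrieved passage plus the metadata needed to cite it (hypothetical schema)."""
    title: str
    url: str
    text: str
    start_seconds: Optional[float] = None  # set for video/audio transcript passages
    page: Optional[int] = None             # set for PDF passages


def citation_link(chunk: SourceChunk) -> str:
    """Return a deep link that opens the source at the cited position."""
    if chunk.start_seconds is not None:
        # W3C Media Fragments temporal syntax: #t=<seconds>
        return f"{chunk.url}#t={int(chunk.start_seconds)}"
    if chunk.page is not None:
        # PDF open parameter understood by most browser PDF viewers
        return f"{chunk.url}#page={chunk.page}"
    return chunk.url


def format_citation(chunk: SourceChunk) -> str:
    """Human-readable label such as 'VAT webinar (01:23:45)' or 'Opinion (p. 7)'."""
    if chunk.start_seconds is not None:
        hours, rest = divmod(int(chunk.start_seconds), 3600)
        minutes, seconds = divmod(rest, 60)
        return f"{chunk.title} ({hours:02d}:{minutes:02d}:{seconds:02d})"
    if chunk.page is not None:
        return f"{chunk.title} (p. {chunk.page})"
    return chunk.title
```

For example, a passage that starts 5,025 seconds into a webinar would be labelled "VAT webinar (01:23:45)" and link to `https://example.org/webinars/vat.mp4#t=5025`. Keeping the position in the passage metadata, rather than asking the model to produce it, means the citation cannot drift from the text that was actually retrieved.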

Source citations also solve a second problem: they turn the chatbot into a gateway to the entire content library. A user who asks a question and receives a relevant excerpt from a webinar is more likely to watch that webinar in full than someone who picks it blindly from a catalogue.

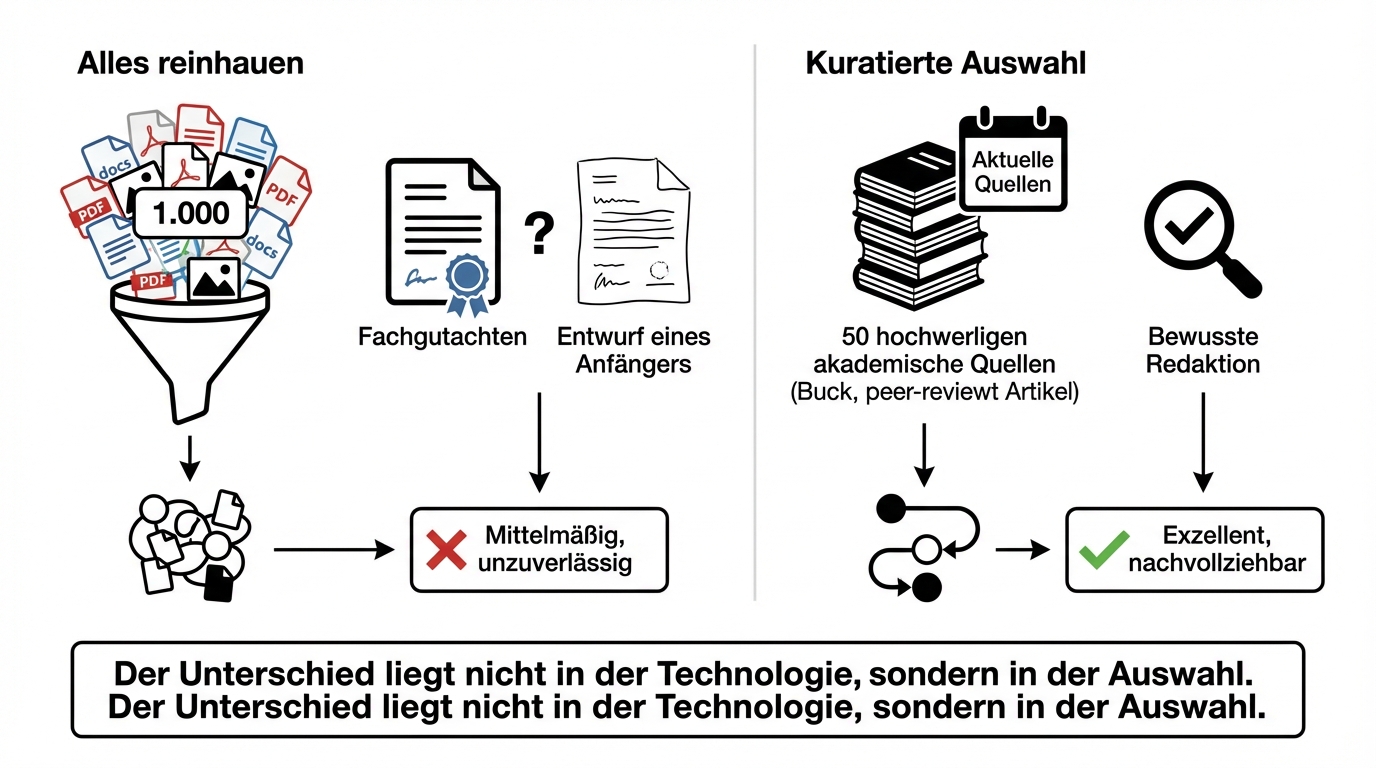

Curation beats volume: why "throw everything in" fails

The most common mistake when building a domain chatbot is to load all available documents into a database and hope the AI finds the right passages.

That doesn't work. When the same knowledge base contains a carefully researched expert opinion alongside a superficial draft from a junior colleague, the AI treats both as equally authoritative. Because the quality of the knowledge base determines the quality of the answers, the results become mediocre.

The difference between a mediocre and an excellent chatbot isn't the technology. It's the deliberate decision about which documents go into the knowledge base and which don't. What's current? What has the necessary depth? What result do we expect for a given question?

This curation isn't a one-time task. During ongoing operations, an editor reviews weekly automated reports showing which questions couldn't be answered well due to missing sources. The knowledge base doesn't grow unchecked -- it's actively maintained. That's a standard business model that specialist publishers have known for decades, just applied to a new medium.
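One way such a weekly report could be produced is sketched below in Python, under stated assumptions: each logged query carries a hypothetical `top_score` (the similarity of the best retrieved passage) and an optional thumbs-down flag, and anything below an assumed threshold of 0.5 is treated as a possible gap in the sources. The field names, topics and threshold are illustrative, not taken from a specific product.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Iterable, List


@dataclass
class QueryLog:
    question: str
    topic: str
    top_score: float           # similarity of the best retrieved passage, 0..1 (assumed)
    thumbs_down: bool = False  # explicit negative user feedback


def coverage_gaps(logs: Iterable[QueryLog], min_score: float = 0.5) -> List[QueryLog]:
    """Queries whose best source matched only weakly, or which users rated badly."""
    return [q for q in logs if q.top_score < min_score or q.thumbs_down]


def weekly_report(logs: List[QueryLog], min_score: float = 0.5) -> str:
    """Plain-text summary for the editor, grouped by topic, largest gap first."""
    gaps = coverage_gaps(logs, min_score)
    lines = [f"{len(gaps)} of {len(logs)} queries flagged as possible source gaps"]
    for topic, count in Counter(q.topic for q in gaps).most_common():
        lines.append(f"- {topic}: {count}")
    return "\n".join(lines)
```

A low retrieval score is only a proxy for "missing source"; the editor still decides whether a flagged topic needs a new document or whether the question was simply out of scope.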

Real expert conversations are the best knowledge base

The best source material for an expert chatbot isn't textbooks or handbooks. It's real, recorded advisory conversations.

A coaching chatbot that has been in production for over a year is based on more than 9,000 minutes of video material and several professional books. The combination of real conversations and structured material creates a knowledge base that doesn't just deliver facts but also reflects the expert's conversational style and reasoning.

Recorded advisory sessions plus the existing conversation guide typically cover 80 to 90 percent of the necessary knowledge base. The remainder is filled through targeted feedback during live operations.

Videos are processed via speech recognition into transcripts with sentence-level timestamps. PDFs pass through document recognition that also captures tables and complex layouts correctly. Different media types require different processing pipelines, but the result is a single, unified, searchable knowledge base.
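A minimal Python sketch of what "unified" can mean in practice: both pipelines emit the same hypothetical `Chunk` record, so search and citation code never need to know the media type. It assumes the speech recognition step already delivers `(start_seconds, sentence)` pairs and the PDF step already delivers one text string per page; the 500-character chunk size is an arbitrary illustrative default.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Chunk:
    source_id: str
    media: str                             # "video" or "pdf"
    text: str
    start_seconds: Optional[float] = None  # start of the first sentence (video only)
    page: Optional[int] = None             # 1-based page number (PDF only)


def chunk_transcript(source_id: str, sentences: List[Tuple[float, str]],
                     max_chars: int = 500) -> List[Chunk]:
    """Group timestamped sentences into chunks; each keeps its first sentence's start time."""
    chunks: List[Chunk] = []
    buf: List[str] = []
    start: Optional[float] = None
    for ts, sentence in sentences:
        # Flush the current chunk if adding this sentence would exceed the size limit.
        if buf and len(" ".join(buf + [sentence])) > max_chars:
            chunks.append(Chunk(source_id, "video", " ".join(buf), start_seconds=start))
            buf, start = [], None
        if start is None:
            start = ts
        buf.append(sentence)
    if buf:
        chunks.append(Chunk(source_id, "video", " ".join(buf), start_seconds=start))
    return chunks


def chunk_pdf(source_id: str, pages: List[str]) -> List[Chunk]:
    """One chunk per non-empty page, keeping the page number for citations."""
    return [Chunk(source_id, "pdf", text.strip(), page=i)
            for i, text in enumerate(pages, start=1) if text.strip()]
```

Splitting only at sentence boundaries is the design choice that makes the minute-exact video citations possible: every chunk starts at a known timestamp.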

The chatbot must understand the domain, not just find documents

Loading documents into a database and adding a search layer on top is sufficient for simple use cases. For real domain questions, it's not.

A chatbot for fire safety law must recognise whether the questioner is planning in Hamburg or Munich, whether it's a high-rise or a timber building, whether the use is commercial or residential. Depending on the answers, entirely different regulations apply. This domain-specific logic doesn't live in the documents themselves -- it's built into the chatbot as configuration: glossaries, specialist abbreviations, metadata constraints that prioritise different sources based on the user's context.

This is also why general-purpose models like ChatGPT cannot take over this task, even if they had access to the same sources. The domain-specific logic, the curated benchmark dataset, and the experience from tens of thousands of real queries together form a competitive advantage that a general language model cannot replicate. Specialist publishers are also unlikely to voluntarily feed their sources into general-purpose models, as that would lead to significantly lower revenues.
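The domain-specific configuration described in this section can be illustrated with a short Python sketch, using hypothetical names throughout: a glossary that expands abbreviations (here the Hamburg and Bavarian building codes, HBauO and BayBO) before searching, and a re-ranking step that drops passages contradicting a hard constraint such as the federal state and boosts passages whose metadata matches the user's context. The metadata keys, the boost value and the choice of `state` as the only hard constraint are assumptions for illustration.

```python
import re
from dataclasses import dataclass, field
from typing import Dict, List, Sequence

# Illustrative glossary (hypothetical); in practice maintained with the domain expert.
GLOSSARY: Dict[str, str] = {
    "HBauO": "Hamburgische Bauordnung",
    "BayBO": "Bayerische Bauordnung",
}


@dataclass
class Passage:
    text: str
    score: float  # similarity score returned by the search layer
    meta: Dict[str, str] = field(default_factory=dict)


def expand_abbreviations(query: str, glossary: Dict[str, str] = GLOSSARY) -> str:
    """Append the long form of every abbreviation found as a whole word in the query."""
    extra = [full for short, full in glossary.items()
             if re.search(rf"\b{re.escape(short)}\b", query)]
    return " ".join([query] + extra)


def rerank(passages: List[Passage], context: Dict[str, str],
           hard_keys: Sequence[str] = ("state",), boost: float = 0.2) -> List[Passage]:
    """Drop passages that contradict a hard constraint; boost those matching the context."""
    scored = []
    for p in passages:
        conflict = any(k in context and k in p.meta and p.meta[k] != context[k]
                       for k in hard_keys)
        if conflict:
            continue  # e.g. Bavarian rules are irrelevant to a Hamburg project
        matches = sum(1 for k, v in context.items() if p.meta.get(k) == v)
        scored.append((p.score + boost * matches, p))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [p for _, p in scored]
```

Filtering versus boosting is a deliberate split: a hard filter guarantees that rules from the wrong jurisdiction never surface, while a soft boost still lets general guidance without state metadata through.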

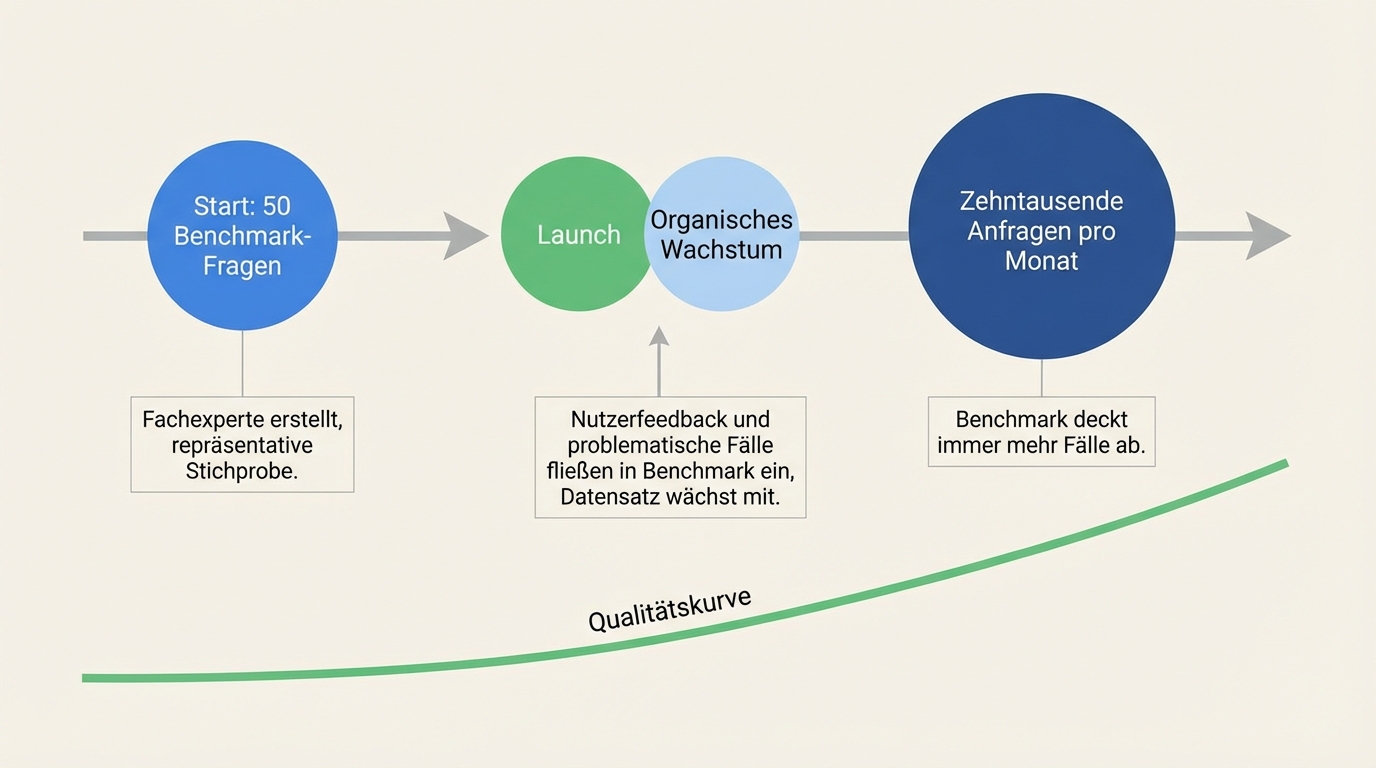

Quality assurance: from 50 benchmark questions to tens of thousands of queries per month

Quality assurance for a domain chatbot starts before launch and grows organically afterwards.

Before launch, a domain expert creates roughly 50 benchmark questions with expected answers and source references. These questions are a representative sample across different knowledge areas. They make it possible to quickly assess system quality and bring the core functionality to a good level within a few weeks.

During live operations, two mechanisms kick in. First, users give feedback on particularly good or bad answers. Second, problematic queries, identified by reviewing their logged traces, are added to the benchmark dataset. The system is adjusted, and the dataset grows organically with real usage data. This way, quality assurance covers more and more cases without anyone having to manually write thousands of test questions.
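A benchmark harness can be quite small. This Python sketch assumes the chatbot is exposed as a hypothetical `answer_fn` that returns the IDs of the sources it cited; it measures how often at least one expected source is cited and lets a reviewed problem query be appended as a new case. Judging the answer text against `expected_answer` is deliberately left out, since that typically needs the domain expert or a separate grader.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Set


@dataclass
class BenchmarkItem:
    question: str
    expected_answer: str
    expected_sources: Set[str]


@dataclass
class Benchmark:
    items: List[BenchmarkItem] = field(default_factory=list)

    def add_from_trace(self, question: str, corrected_answer: str,
                       correct_sources: Set[str]) -> None:
        """Turn a reviewed problem query from live operation into a new benchmark case."""
        self.items.append(BenchmarkItem(question, corrected_answer, set(correct_sources)))

    def source_hit_rate(self, answer_fn: Callable[[str], Set[str]]) -> float:
        """Share of questions where the bot cites at least one expected source."""
        if not self.items:
            return 0.0
        hits = sum(1 for item in self.items
                   if answer_fn(item.question) & item.expected_sources)
        return hits / len(self.items)
```

Rerunning `source_hit_rate` after every configuration change shows quickly whether a fix for one question broke others.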

Usage statistics round out the picture: how many users are engaging? How many questions are being asked? Which topic areas are most in demand? These numbers are essential for demonstrating the chatbot's value to the organisation.

The agent operator: a new role between technology and the business

Running a domain chatbot requires a role that didn't exist before: the agent operator. This is neither a developer nor a manager, but a domain expert who regularly tests the system, evaluates the results, and provides feedback in natural language.

What makes this role distinctive: it requires no technical skills. The system is steered through natural language. The agent operator says: "The answer was too short, the source citation is missing, this should have also referenced the 2023 expert opinion." The development team implements it.

In practice, the agent operator invests about four hours per week at the start, then about two. They can delegate the task to editors who review weekly reports and maintain the knowledge base. The knowledge doesn't live only in one person's head -- it's documented in the system's configurations and prompts. In larger organisations, several people are trained for this role, with regular exchange.

From Q&A chatbot to gateway for the entire content library

A domain chatbot starts as a tool for answering technical questions. In practice, it quickly evolves beyond that.

The core functionality -- answering domain questions with source-backed responses -- is typically in place within a few weeks. On this foundation, additional features can be built: document generation (a technical question becomes an email to the CEO or a brief expert opinion), access to gated content (the chatbot manages which videos a user may view), or integration into existing platforms and member areas.

A trade association, for example, uses the chatbot as an entry point for its members: a limited number of free questions as a trial offer, then a subscription model for unlimited access. Non-members can test the chatbot before deciding on a membership. The chatbot thus becomes both a customer service tool and an acquisition instrument.
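The trial-then-subscription logic and the gated-video check could look roughly like the Python sketch below. The allowance of five free questions, the `User` fields and the idea of per-user video unlocks are hypothetical; a real platform would persist this state in its member database rather than in memory.

```python
from dataclasses import dataclass, field
from typing import Set

FREE_QUESTIONS = 5  # hypothetical trial allowance for non-members


@dataclass
class User:
    user_id: str
    is_member: bool = False
    questions_asked: int = 0
    unlocked_videos: Set[str] = field(default_factory=set)


def try_ask(user: User, free_limit: int = FREE_QUESTIONS) -> bool:
    """Members ask without limit; non-members get a fixed number of trial questions."""
    if user.is_member or user.questions_asked < free_limit:
        user.questions_asked += 1
        return True
    return False  # trial used up: show the membership offer instead of an answer


def can_view(user: User, video_id: str, members_only: Set[str]) -> bool:
    """Gated videos require membership or an individual unlock."""
    return (video_id not in members_only
            or user.is_member
            or video_id in user.unlocked_videos)
```

When `can_view` returns False, the chatbot can still show the cited excerpt and title, which is exactly the moment a non-member sees what the full library would offer.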

What the introduction requires

Three prerequisites determine success:

Curated domain sources instead of data overload. Not every document belongs in the knowledge base. Ten well-selected expert opinions deliver better results than a thousand unsorted files. Deliberate selection is the first and most important step.

A domain expert who evaluates the system. The agent operator doesn't need technical skills, but deep domain knowledge. They evaluate answers, flag gaps, and steer ongoing development. Four hours per week at first, then two.

Benchmark questions as a quality anchor. Around 50 sample questions with expected answers and source references form the foundation of quality assurance. The effort to create them is modest; the value for quickly assessing system quality is substantial.

The next step

We start with a conversation to jointly clarify: which domain knowledge do you want to make accessible? In what form does it exist? Who are your users, and what questions do they ask? Afterwards, you can assess whether an AI expert chatbot is the right path for your situation.

No strategy project, no consulting fee for the first conversation. Just an honest assessment of what's possible with your domain knowledge.