The most common concern we hear from executives before an AI project starts: "Won't this make us dependent on you?" The concern is legitimate. And the honest answer is: if the service provider isn't actively working toward making themselves dispensable, then yes.

Our goal in every project is therefore a concrete end date for external support. Zero hours of ongoing assistance. That's the target. Not realistic from day one in every project, but unmistakable as a direction.

Building capability beats buying point solutions

The investment with the greatest leverage is not a single AI solution. It's enabling your own people.

A parallel from an earlier technology revolution makes the point: when spreadsheets first appeared, the most successful companies weren't the ones that bought individual calculation tools. They were the ones that trained their people to use spreadsheets. A trained employee could then solve dozens of problems. A purchased tool solved exactly one.

It's the same with AI. When a company trains 10 to 20 employees in general AI problem-solving skills, dozens of improvements emerge that no external partner could have identified beforehand. Because only your own people know where the real pain points are.

This doesn't mean that individual AI solutions are unnecessary. For complex, high-volume processes, tailored systems are needed. But the foundational capability of the team is the bedrock on which everything else is built.

The domain expert steers the AI, not the IT specialist

The central role in building internal AI capability belongs to the domain expert: the person who evaluates the AI's output and improves the system. This person comes from the business unit, not from IT. They don't need programming skills or prior AI knowledge. What they need is deep domain expertise.

Why? Because the bottleneck in AI systems is not the technology but expert judgement. When a system generates an answer, only the domain expert can judge whether it's correct. The AI partner doesn't have this domain knowledge. They understand the technology, but not the nuances of the industry, the internal processes, or the customer expectations.

In practice it looks like this: at one company, the head of sales took on this role for four weeks. He reviewed the first AI outputs, gave feedback, and co-developed the adjustment logic. After that, the team could carry on independently. The time invested: about four hours per week at the start, around two hours per week after that. Not a full-time position, but not a side task either. A deliberate investment by the company in its own capability.

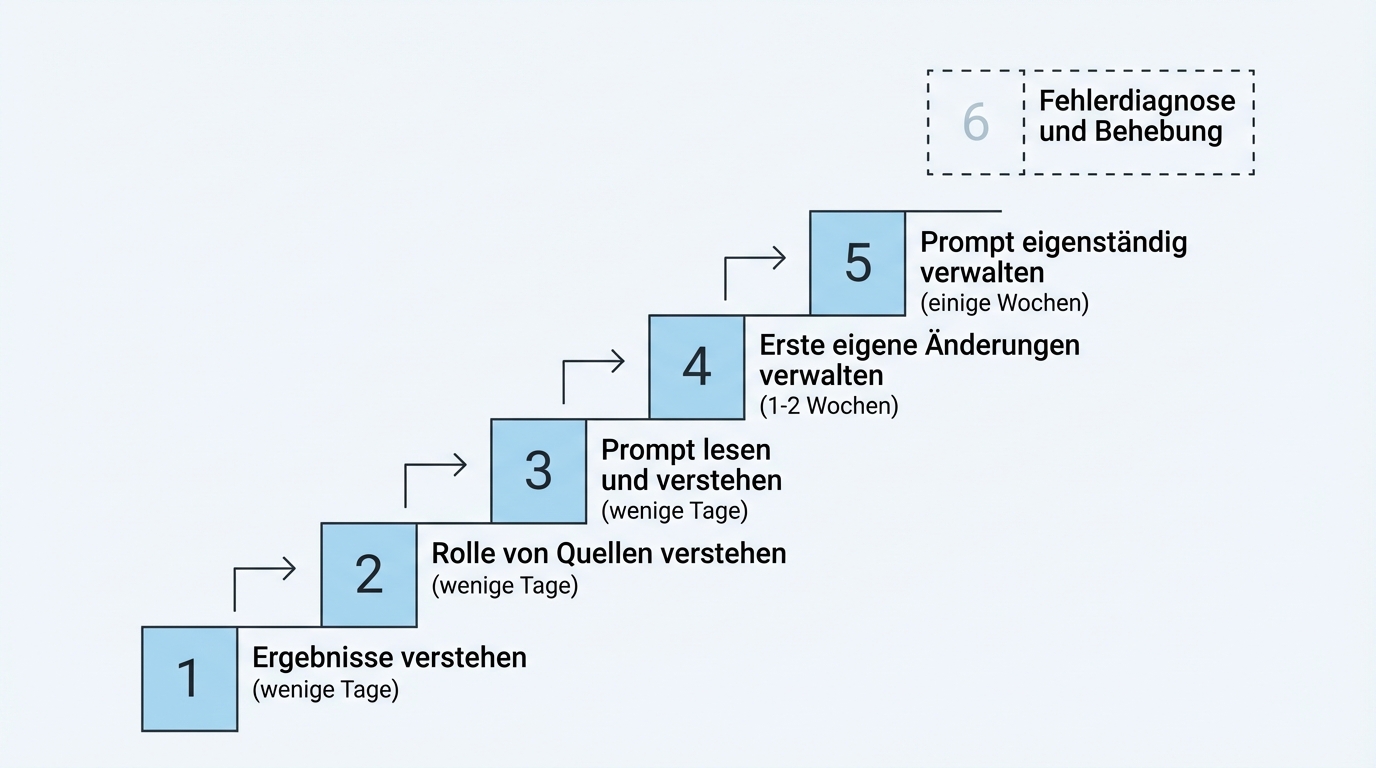

Five stages to self-sufficiency

Capability building follows a clear path. Not everyone needs to go through all stages, but each stage has concrete value.

Stage 1: Understand how the system generates results. The basic orientation. What goes in, what comes out, why does a result look the way it does?

Stage 2: Understand the role of sources and context. When a result is poor, is it the data, the configuration, or the model? Making this distinction is the first step toward diagnosing problems.

Stage 3: Understand the prompt. The prompt is the instruction set that governs the system's behaviour. Anyone who can read and understand the prompt knows why the system responds the way it does.

Stage 4: Make first changes to the prompt. Small adjustments that improve results. Adding a technical term, supplementing a formatting rule, defining an exception.

Stage 5: Manage and evolve the prompt independently. The target level. The domain expert can adapt the system to changing requirements on their own, without external help.

Beyond that, there's a sixth, advanced capability: when a result is poor, being able to work out whether the cause is the context, the prompt, or a technical fault, and then to fix it independently. That's the level at which a company is fully self-sufficient in day-to-day operations.
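The diagnostic question behind this sixth capability can be written down as a short checklist. The Python sketch below is illustrative only: the `Observation` fields and the order of the checks are assumptions made for this example, not a procedure taken from a specific project.

```python
from dataclasses import dataclass
from enum import Enum


class Cause(Enum):
    TECHNICAL_FAULT = "technical fault"
    CONTEXT = "context"
    PROMPT = "prompt"


@dataclass
class Observation:
    """What the domain expert notes about one poor result (hypothetical fields)."""
    system_responded: bool         # did the system return an answer at all?
    needed_facts_in_context: bool  # were the relevant sources actually supplied?


def likely_cause(obs: Observation) -> Cause:
    """Suggest where to look first, starting with the simplest explanation."""
    if not obs.system_responded:
        return Cause.TECHNICAL_FAULT
    if not obs.needed_facts_in_context:
        return Cause.CONTEXT
    # The system ran and had the facts, so the instructions are the next suspect.
    return Cause.PROMPT
```

The value lies less in the code than in the habit it encodes: rule out a technical fault and missing context before rewriting the prompt.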

Experience across multiple projects shows: stages 1 to 3 are reachable within a few days. Stage 4 takes one to two weeks of hands-on practice. Stage 5 develops over several weeks through regular use. Interactive training sessions where people work together on real problems accelerate the process significantly.

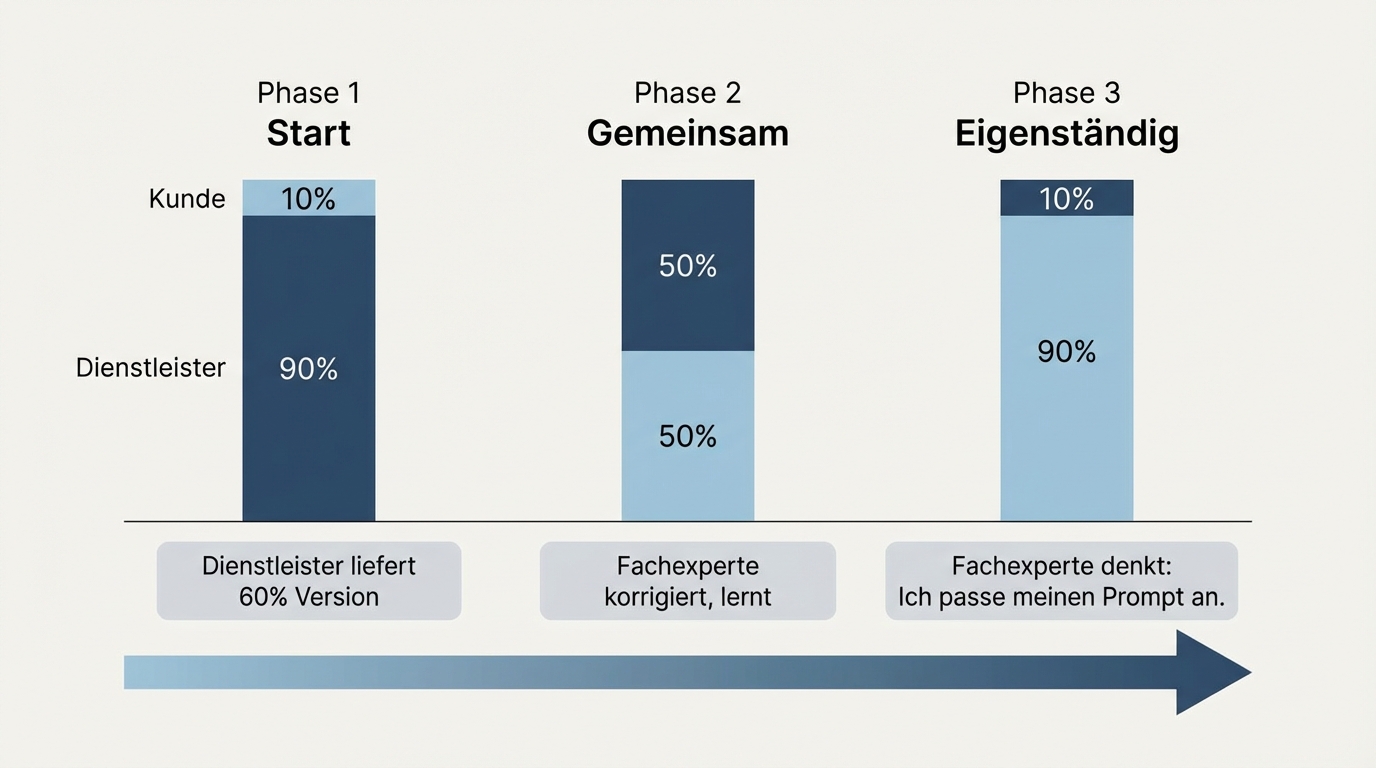

60 percent as a starting point, then improve together

The enablement model that has proven itself in practice follows a clear pattern.

The partner delivers the first version of the system, which typically reaches about 60 percent of the desired quality. Not a perfect system, but a functioning starting point that covers the most important cases.

Then partner and domain expert work together on improvements. The domain expert sees what's missing, corrects, gives feedback. The partner shows how corrections feed into the adjustment logic. This is the phase where knowledge transfer actually happens: not through training documents, but through working together on the concrete problem.

After that, the partner steps back to roughly 10 percent support, reserved for the truly difficult cases. The goal: the domain expert thinks "I'll adjust my prompt" rather than "I'll call the partner." That's the moment internal capability is in place.

Putting the prompt in the domain expert's hands works better than any other form of knowledge transfer. The domain expert is the perfect feedback loop because they know the domain, recognise the right answer, and can immediately judge the improvement.

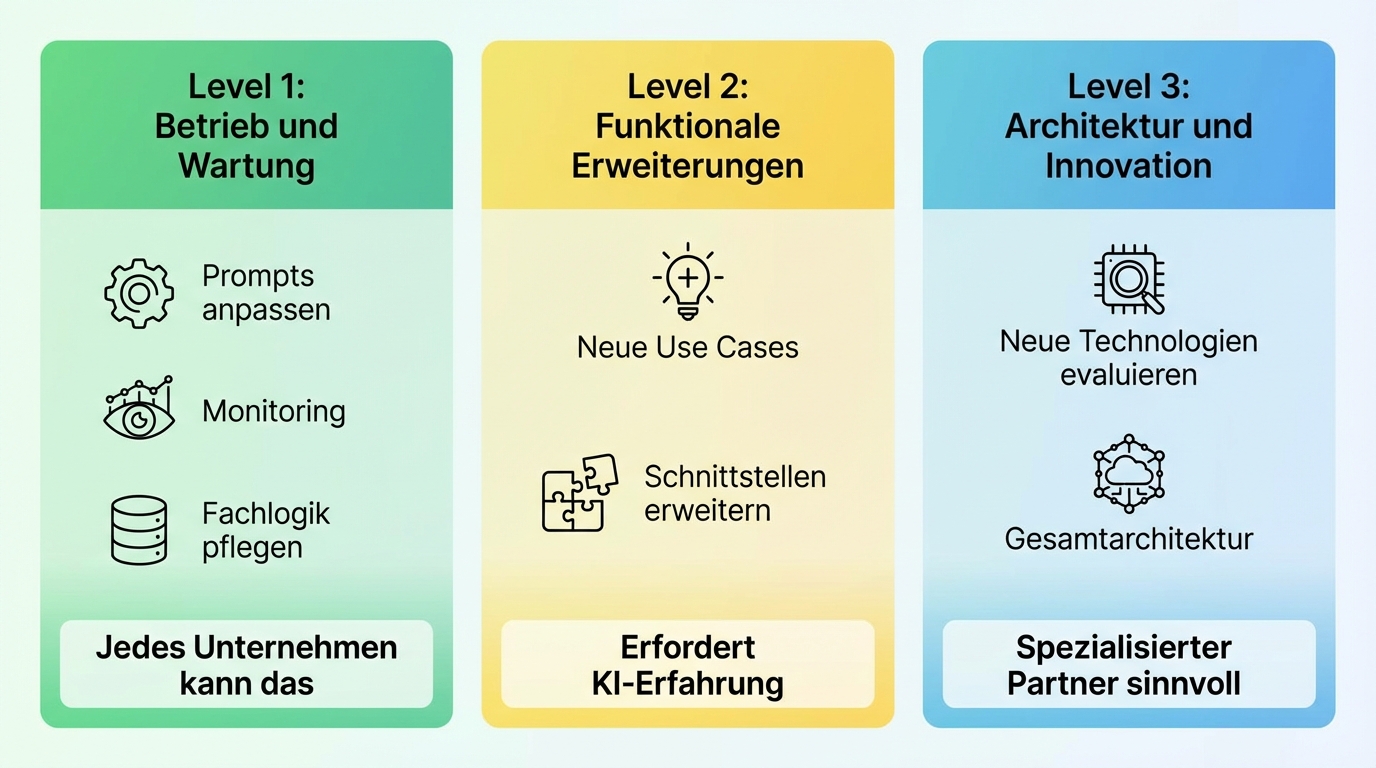

Three capability levels for the independence question

What can companies realistically handle themselves, and where does external need remain? The honest answer distinguishes three levels.

Level 1: Operations and simple maintenance. Keeping the existing system running, adjusting business logic through prompts, identifying and resolving minor issues. This is the level every company can realistically build. The IT department handles infrastructure (monitoring, security updates), the domain expert handles content maintenance. This requires an operations handbook and a few handover sessions.

Level 2: Functional extensions. Adding new use cases, expanding interfaces, adapting the system to changed business processes. This requires someone with experience building AI systems. For most mid-market companies that means: either hire such a person or continue to buy in expertise on a project basis.

Level 3: Architecture and innovation. Evaluating new technologies, evolving the overall architecture, unlocking fundamentally new capabilities. This is the level where a specialised partner is structurally better positioned, because they work full-time in the technology and pool experience across many projects.

The honest assessment: full independence across all three levels is neither realistic nor economically sensible for most mid-market companies. Level 1 is the minimum and achievable. Level 2 is the goal for companies that see AI as a core competency. Level 3 remains a partnership for most. That's not a disadvantage but a deliberate division of labour. Companies retain accountants, lawyers, or auditors long-term for the same reason: not because they couldn't build the competency, but because buying it in is more economical.

Preserving knowledge: the prompt is the best documentation

A common objection: what happens when the domain expert leaves the company? Does all the AI knowledge disappear?

Compared to the pre-AI era, the situation is better, not worse. Without AI, the knowledge lived only in the head of the most competent person. Nobody knew exactly how they phrased their texts (profile texts, for example), why certain wording was chosen, or which rules they applied implicitly. That knowledge was lost with every job change.

Now it's explicit in the prompt: which rules apply, which exceptions exist, which formulations are preferred, which are avoided. What's well-documented for the AI is also understandable for a successor. The prompt is, almost as a side effect, the best process documentation the company has ever had.
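To illustrate how a prompt can double as process documentation, here is a minimal Python sketch. The `PromptSpec` class, its field names, and the example content are all hypothetical. The sketch assumes only that the prompt is kept as named sections (rules, exceptions, preferred and avoided formulations) that are assembled into plain text.

```python
from dataclasses import dataclass, field


@dataclass
class PromptSpec:
    """Hypothetical container for the knowledge a prompt makes explicit."""
    task: str
    rules: list[str] = field(default_factory=list)
    exceptions: list[str] = field(default_factory=list)
    preferred: list[str] = field(default_factory=list)
    avoided: list[str] = field(default_factory=list)

    def render(self) -> str:
        """Assemble the prompt text; empty sections are left out."""
        sections = [
            ("Rules", self.rules),
            ("Exceptions", self.exceptions),
            ("Preferred formulations", self.preferred),
            ("Avoided formulations", self.avoided),
        ]
        lines = [self.task]
        for title, items in sections:
            if items:
                lines.append("")
                lines.append(f"{title}:")
                lines.extend(f"- {item}" for item in items)
        return "\n".join(lines)


# Made-up example content, for illustration only.
spec = PromptSpec(
    task="Write a short product description for the sales team.",
    rules=["Use at most three sentences."],
    avoided=["Superlatives such as 'best' or 'unique'."],
)
# A Stage 4 style change: the domain expert adds one exception.
spec.exceptions.append("Safety-critical products may use a fourth sentence.")
print(spec.render())
```

A successor who reads the rendered text sees the same rules and exceptions the system sees, which is the sense in which the prompt serves as documentation.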

In larger organisations we train teams of several people and create a documented guide. Single points of failure are avoided not by hope but by structure.

Meta-capability over certificates

One final, important distinction: building AI capability does not mean training every employee on a specific tool. A training certificate for a particular AI system can be obsolete in six months, because the technology moves faster than any curriculum.

What must endure is the meta-skill: the ability to adapt to new tools, assess their strengths and limitations, and deploy them productively. This is less a matter of technical knowledge than of mindset: curiosity, willingness to experiment, and the ability to learn from mistakes.

Three levels of AI enablement can run in parallel. The first is broad foundational training that reaches all employees: how does AI work, what can it do, what can't it do? The second is deeper enablement for the domain experts who manage specific systems: prompt management, feedback loops, quality assurance. The third is transformation support for companies that want to fundamentally redesign their processes.

Not every company needs all three enablement levels. But every company needs at least the first.

The next step

Identify the domain expert in your company who has the deepest knowledge of the process you want to automate. Bring that person into a conversation with us. We'll show how capability building works in practice, which stages your team will go through, and what timeline for self-sufficiency is realistic. No generic presentation, but a concrete plan for your company.