Most companies look for certifications, reference lists, and technology expertise when choosing an AI partner. That's understandable, but it points in the wrong direction.

What actually determines success or failure: can this partner translate your company's implicit knowledge into working AI systems? Does it understand how your employees actually work, where the real bottleneck lies, what's buried in your documents, and what nobody has ever written down?

That capability can be tested. There are clear criteria that non-technical people can apply, and there are red flags you can spot early.

Test results, not promises

The first and most important test is simple: show me what you've built.

A serious AI partner shows working software: videos of real systems in production, test links you can try out yourself, live demos with real data. Seeing is believing, and a provider who can't show a working system probably shouldn't be building yours.

Many providers instead present architecture diagrams, technology stacks, and slide decks about AI trends. That's not a sign of substance; it's a sign that real projects are missing. The question "Can I test the system today?" is the most direct path to the truth.

This isn't about seeing perfect systems. Experienced teams also show unfinished versions, explain what doesn't work yet, and reason through why they made certain decisions. That's a quality signal, not a sign of weakness.

What a good partner does that you don't expect

Honest advice sometimes means: we're not the right choice.

An experienced AI partner will in some situations recommend off-the-shelf software instead of a custom project. They'll advise against projects when the prerequisites aren't met. They'll say when a problem is better solved through a better process than through AI.

That sounds like a bad business model. In practice, it's the opposite: it's the hallmark of providers with enough experience to forgo short-term revenue.

The question to ask: "What would you advise me against?" Anyone who can't answer that question or immediately falls back on standard responses is probably thinking more about their invoice than your success.

Three criteria you can test in the first meeting

Iterative approach instead of back-room development

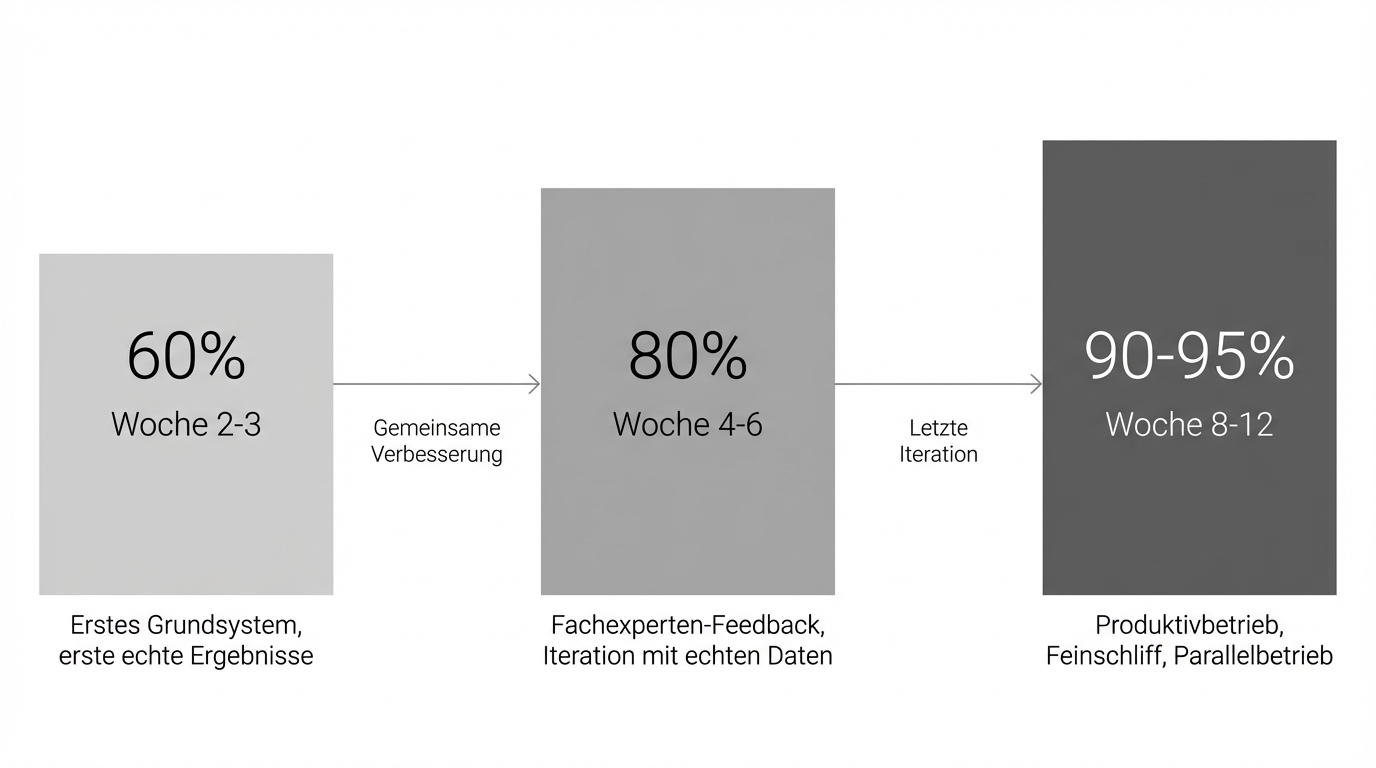

AI projects work iteratively. The right partner delivers a first working version within a few weeks, and you then improve it together. No months-long concept phase, no promise of perfection at project end.

As a benchmark from multiple projects: a working base system after about two weeks is realistic. At that point, result quality typically sits around 60 percent of the target state. By the time the system goes into production after three to four months, it rises to 90 to 95 percent. This path of continuous improvement protects against costly wrong turns.

Ask concretely: "When will I see the first result?" Anyone who estimates more than four weeks until the first demo is doing traditional IT development, not AI development.

Source transparency as a foundation

Every answer an AI system gives must be traceable back to its sources. Not as a technical nicety, but as a core principle. Only then can an employee verify whether the AI's answer is correct. Only then do you build trust rather than demanding blind faith.

If a provider treats this as an optional feature rather than a given, that's a quality problem.
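To make the principle concrete, here is a minimal Python sketch of an answer that always carries its sources. All names (`SourceRef`, `Answer`, `is_verifiable`, `render`) and the example contract text are hypothetical and only illustrate the idea; a real system would fill these fields from its retrieval step.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SourceRef:
    """Pointer to the passage an answer relies on (hypothetical fields)."""
    document: str  # e.g. a file name or document ID
    location: str  # e.g. a page or section label
    quote: str     # the passage the answer is based on


@dataclass(frozen=True)
class Answer:
    text: str
    sources: tuple[SourceRef, ...] = ()


def is_verifiable(answer: Answer) -> bool:
    """True only if there is at least one source and every source has a quote."""
    return bool(answer.sources) and all(s.quote.strip() for s in answer.sources)


def render(answer: Answer) -> str:
    """Show the answer together with its sources, or withhold it without them."""
    if not is_verifiable(answer):
        return "No verifiable source found; answer withheld."
    lines = [answer.text, "", "Sources:"]
    for i, s in enumerate(answer.sources, start=1):
        lines.append(f'[{i}] {s.document}, {s.location}: "{s.quote}"')
    return "\n".join(lines)


# Made-up example data, for illustration only.
example = Answer(
    text="The notice period is three months.",
    sources=(SourceRef("contract.pdf", "p. 4",
                       "may be terminated with three months' notice"),),
)
print(render(example))
```

The design choice worth noting: sources are part of the answer type itself, so an answer without them cannot be displayed by accident.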

Protection against lock-in

Language models evolve fast. A system that uses GPT-4o today should be able to switch to a better model in a year without rebuilding the entire system.

That's only possible with a software layer that makes models interchangeable. A provider that hard-codes a single model or a single vendor is building in lock-in that you'll pay dearly for later.
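What such a layer can look like, as a minimal Python sketch: the application talks to a small interface, and the concrete model is chosen by name from configuration. `ChatModel`, `EchoModel`, `MODEL_REGISTRY`, and the names `model-a` and `model-b` are hypothetical; a real adapter would call a vendor's SDK inside `complete`.

```python
from typing import Protocol


class ChatModel(Protocol):
    """The only thing the application knows about a model."""

    def complete(self, prompt: str) -> str: ...


class EchoModel:
    """Stand-in for a real adapter; it only echoes the prompt."""

    def __init__(self, name: str) -> None:
        self.name = name

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"


# Swapping models means changing this registry or the configured name,
# not the application code below.
MODEL_REGISTRY: dict[str, ChatModel] = {
    "model-a": EchoModel("model-a"),
    "model-b": EchoModel("model-b"),
}


def answer_question(question: str, model_name: str) -> str:
    """Application logic depends on the interface, never on a vendor."""
    model = MODEL_REGISTRY[model_name]
    return model.complete(question)


print(answer_question("Summarise the contract.", "model-a"))
print(answer_question("Summarise the contract.", "model-b"))
```

A question worth asking a provider: where in their system is the equivalent of this registry, and how many files change when the model does?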

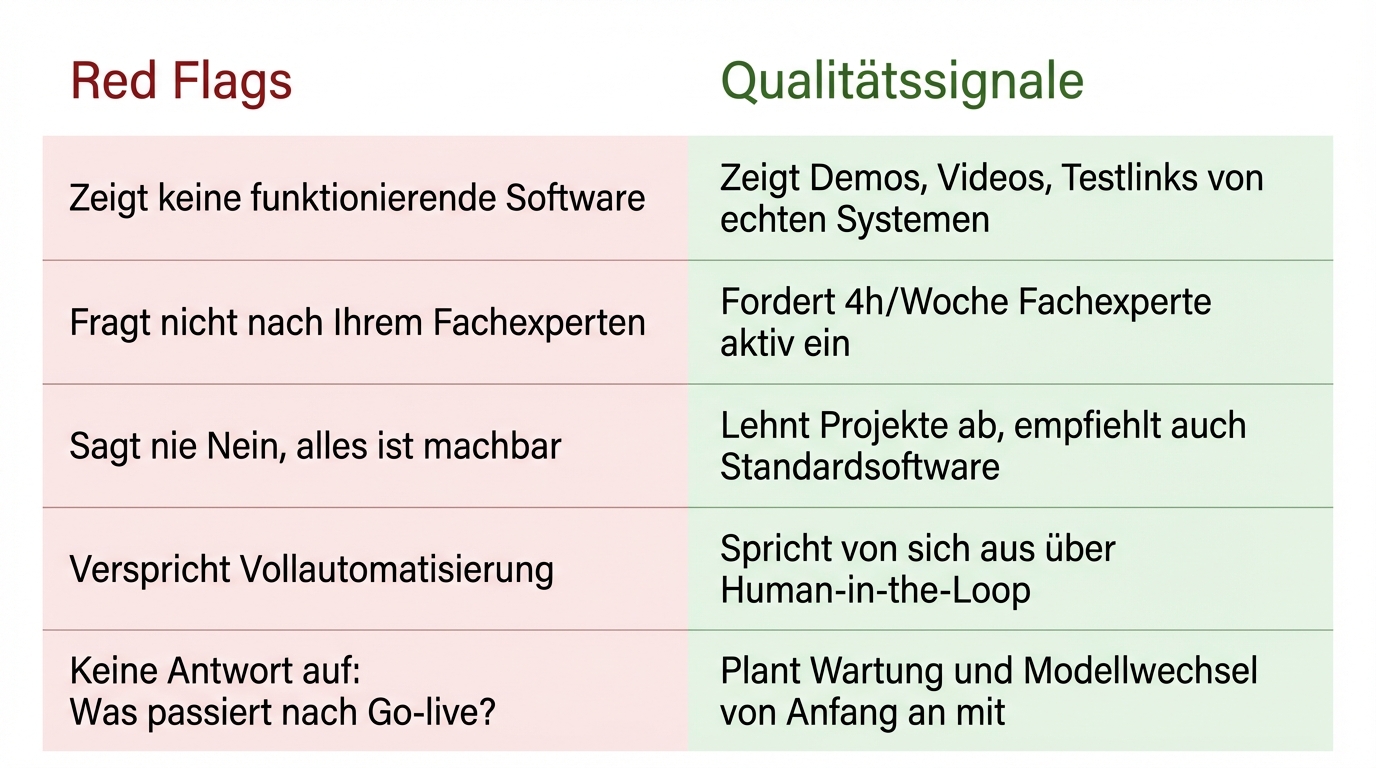

Five red flags that reveal a bad partner

- They show nothing that works: Many providers present architecture diagrams, technology stacks, and slide decks. What they don't show: a system that runs today. If you ask for a demo and are offered a concept phase instead, the provider has probably never taken a project into production.

- They don't ask about your domain expert: The most important resource for an AI project isn't the budget but a person on the client side who truly knows the process. If a provider doesn't actively ask about this person and their availability in the first meeting, they're either planning to work without real domain knowledge or don't understand the dynamics of real projects.

- They never say no: An experienced partner turns down projects where the prerequisites are missing. They recommend off-the-shelf software when it's the better fit. They name limitations. Anyone who nods at every use case and never flags risks is optimising for their revenue, not your success.

- They promise full automation: Anyone who claims an AI system can fully take over processes without human review either underestimates the risks or doesn't know them. Serious providers bring up human in the loop on their own, along with cases that shouldn't be automated and the limits of today's technology.

- They have no answer to "What happens after go-live?": AI systems age differently from traditional software. Models get replaced, requirements change, sources need maintenance. If a provider doesn't raise the topic of maintenance and ongoing development in the first meeting, they're only thinking as far as project delivery.

What needs to be in place on your side

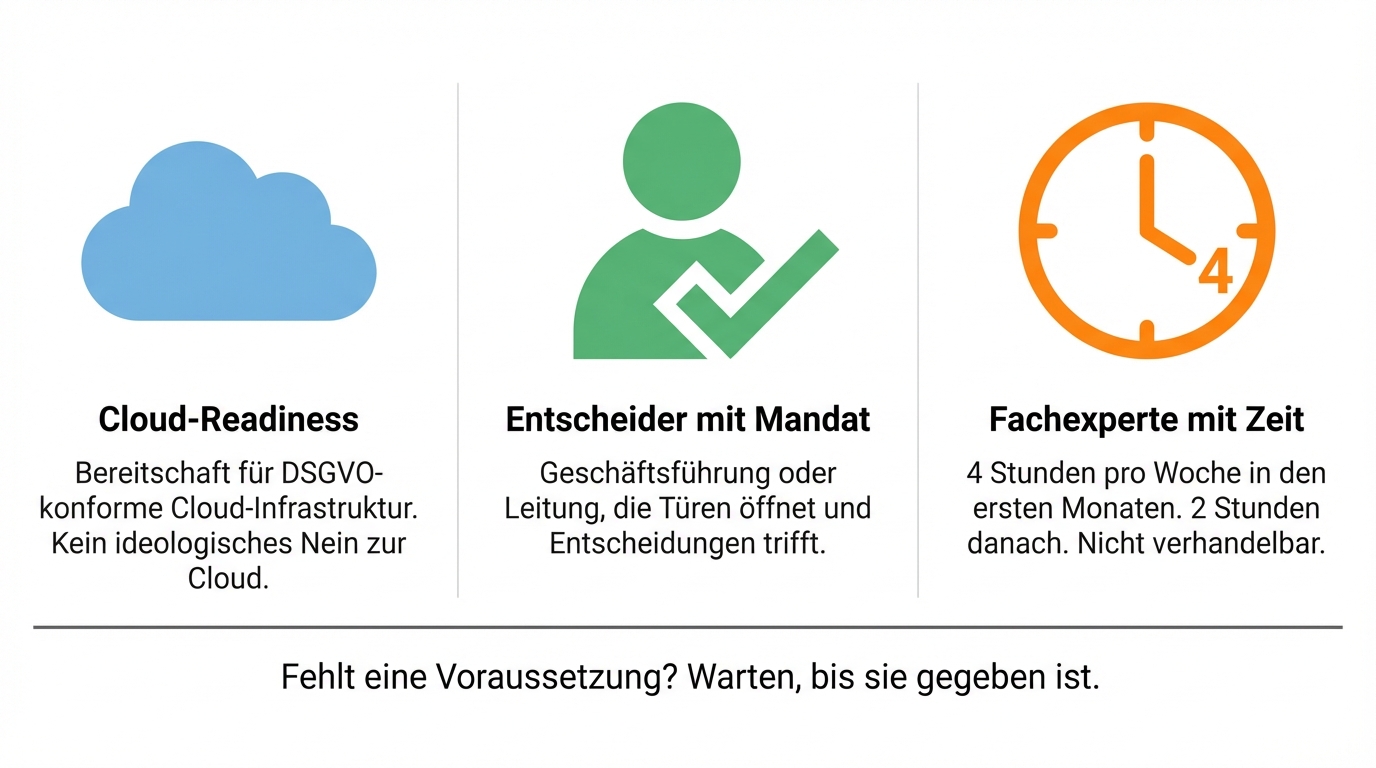

The best partner selection won't help if the fundamentals are missing on the client side. Three things are necessary:

- Cloud readiness: At minimum, the willingness to work with GDPR-compliant cloud infrastructure. Companies that categorically reject any cloud solution significantly limit the quality of the outcome.

- A decision-maker with a mandate: Someone at management or executive level who champions the project, makes decisions, and opens doors internally. AI projects need productive friction within the organisation, not insulation from it.

- A domain expert with available time: The person who knows the process to be automated best must be regularly accessible. Two to four hours per week over three to four months is a more realistic requirement than many clients initially expect.

If any of these prerequisites is missing, the advice is: wait until they're in place. A mediocre project with good prerequisites delivers more than a good project with bad ones.

Data quality: no longer a dealbreaker

Many companies believe their data isn't good enough for AI: documents in different formats, unstructured filing systems, incomplete metadata.

That used to be a serious limitation. Since mid-2025, it no longer is. Modern models process heterogeneous data sources directly, without extensive preprocessing. Quality problems in AI systems today almost always stem from the sources themselves, not from the AI: incorrect, outdated, or contradictory information in the original documents. That's a content problem, not a technical one.

The right question isn't: "Is our data good enough?" It's: "Is the information in our sources correct and up to date?"

What sets AI apart from traditional IT development

One aspect many decision-makers underestimate: AI systems age differently from traditional software.

A CRM system built today will still be essentially the same in two years. An AI system that's state of the art today will, in 18 months, run on models that are significantly more capable. That's not a problem -- it's an advantage, provided the system was built in a modular way.

In concrete terms, that means two things. First: the business case for AI is more dynamic than for traditional software. A project that looks like a two-year payback today may pay for itself faster as models improve. Second: plan for maintenance costs. As a benchmark, budget roughly 15 to 20 percent of the original development cost per year for upkeep and further development.
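The maintenance benchmark is easy to turn into a budget line. The following Python sketch applies the 15 to 20 percent range to a development cost; the figure of 100,000 is a made-up example, and the function name is hypothetical.

```python
def annual_maintenance_range(development_cost: float,
                             low_pct: float = 15,
                             high_pct: float = 20) -> tuple[float, float]:
    """Return the (low, high) yearly maintenance budget for a development cost.

    The default percentages are the 15 to 20 percent benchmark from the text.
    """
    if development_cost < 0:
        raise ValueError("development_cost must not be negative")
    return (development_cost * low_pct / 100,
            development_cost * high_pct / 100)


# Made-up example: a project that cost 100,000 to build.
low, high = annual_maintenance_range(100_000)
print(f"{low:,.0f} to {high:,.0f} per year")  # prints "15,000 to 20,000 per year"
```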

What a low-risk entry point looks like

The most common mistake in AI projects isn't choosing the wrong partner -- it's choosing the wrong starting point.

Not: tackle the biggest or most strategically important problem. Instead: find the tightest bottleneck. The process where an employee spends an hour every day on a task that is 80 percent repetitive. That's where the ROI becomes visible fastest, the risk is lowest, and the learning effect for everyone involved is greatest.

A low-risk entry always runs in parallel: the AI system operates alongside the existing process, not instead of it. Only when the quality is convincing does the manual step get reduced. That makes the entry reversible at any time and builds a kind of trust that no promise can replace.
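A parallel run can be tracked with very little machinery. The Python sketch below records, case by case, whether the AI result matches the manual result, while the manual result stays authoritative. The class name, the exact-match comparison, and both thresholds are assumptions for illustration; what counts as "convincing" quality is something each project has to define for itself.

```python
from dataclasses import dataclass


@dataclass
class ParallelRun:
    """Tracks agreement between the manual process and the AI system."""
    threshold: float  # hypothetical agreement rate considered convincing
    min_cases: int    # hypothetical minimum number of compared cases
    matches: int = 0
    total: int = 0

    def record(self, manual_result: str, ai_result: str) -> str:
        """Compare both results; the manual result is the one that is used."""
        self.total += 1
        if manual_result == ai_result:
            self.matches += 1
        return manual_result

    @property
    def agreement(self) -> float:
        return self.matches / self.total if self.total else 0.0

    def ready_to_reduce_manual_step(self) -> bool:
        """True once enough cases were compared and agreement is high enough."""
        return self.total >= self.min_cases and self.agreement >= self.threshold


run = ParallelRun(threshold=0.9, min_cases=4)
for manual, ai in [("A", "A"), ("B", "B"), ("C", "X"), ("D", "D")]:
    run.record(manual, ai)
print(run.agreement, run.ready_to_reduce_manual_step())
```

Because `record` always returns the manual result, switching the AI system off at any point changes nothing in the existing process.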

If that first step goes well, the organisation gains experience: with the partner, with the technology, with its own approach to AI. That's more valuable than any certification.

Who these considerations are especially relevant for

- Companies with knowledge-intensive processes: Proposal creation, document processing, internal knowledge research, customer enquiries. Anywhere employees spend significant time reading, understanding, and summarising information.

- Companies with high confidentiality requirements: With the right infrastructure and architecture, GDPR-compliant AI is feasible even for organisations with strict confidentiality obligations.

- Companies that have had bad experiences with AI projects: Often the issue wasn't the technology but missing prerequisites or wrong expectations. A new project with the right starting point can turn out very differently.

What you don't need: a perfect data set, a large AI budget, an IT lead with AI experience. You need a clear bottleneck, a decision-maker with a mandate, and a partner who shows results rather than slides.

The next step

The best way to evaluate an AI partner is a concrete conversation about your concrete use case. No demo day, no introductory talk.

If you want to know whether your use case is the right starting point and what a realistic timeframe would look like: get in touch. We'll look at your process and give you an honest assessment, including the prerequisites you need to meet internally.