A request comes in. By email, through a contact form, by phone. "Need a spare part for our unit, model BX-400, please send a quote." The sales rep opens the request and immediately sees: the serial number is missing, the delivery address is unclear, and model BX-400 comes in three variants. Draft a follow-up question, send it, wait. Two days later, the answer arrives. In the meantime, the request has joined the queue.

This back-and-forth costs companies more than most of them measure. Not just the processing time per request, but also the interruptions, the waiting periods, and the cases where follow-up questions are simply forgotten.

AI can fundamentally change this process: the request is analysed the moment it arrives. Missing information is identified, a follow-up query is generated, and the customer receives it within minutes. While they are still at their desk, still in the context of their own request.

Why manual completeness checks don't work

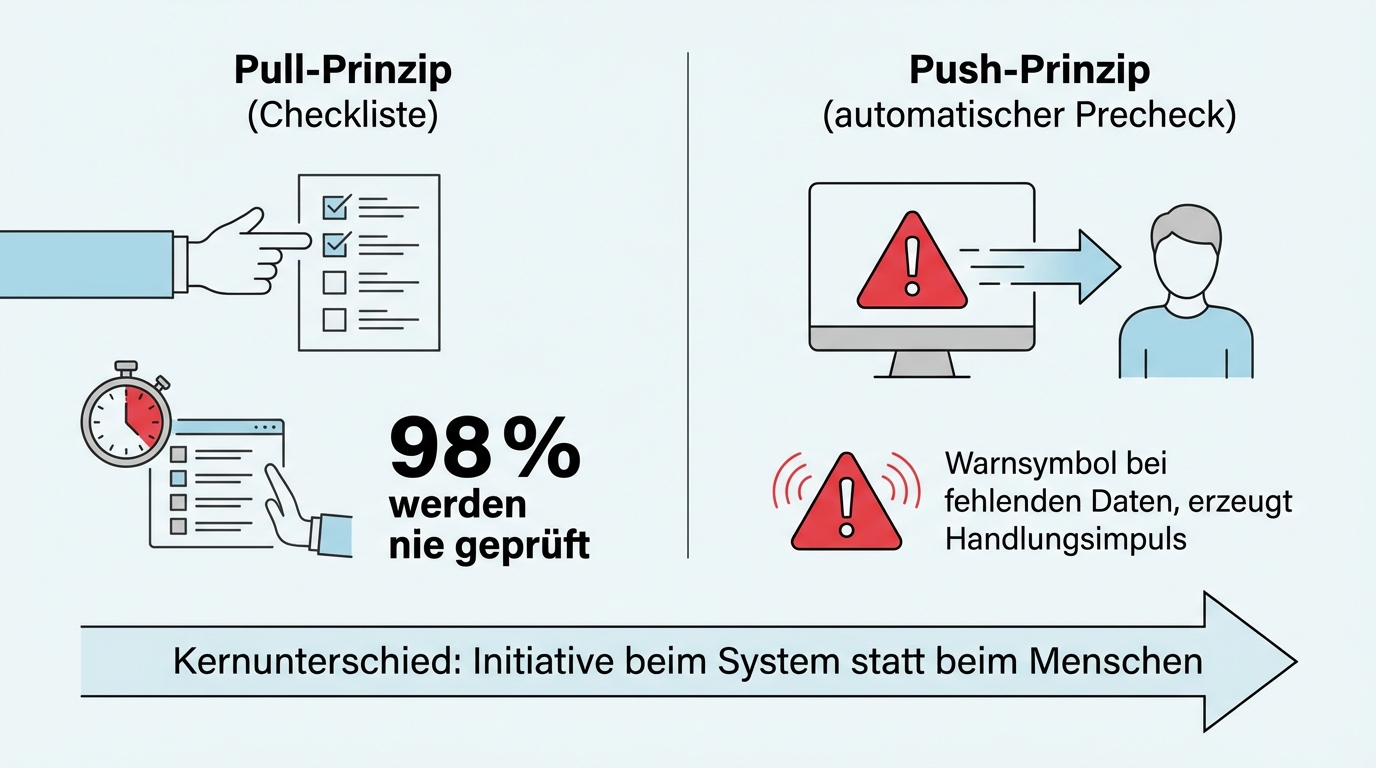

Almost every company has a form, a checklist, or a guideline for reviewing incoming requests. And almost everywhere, this review is not actually carried out in practice.

At one industrial company, a department head put it this way: "In 98 percent of cases, the form isn't filled out." Not out of negligence, but because under time pressure, the manual check is the first thing to go. At a partner firm, the same picture: "From my perspective it takes two minutes. But the team still doesn't do it."

The problem isn't employee discipline. The problem is the mechanism. A manual check requires the employee to take the initiative and perform the review. That works when people are fresh and alert. Under time pressure, the check gets pushed aside. An automatic pre-check works differently: it comes to the employee instead of waiting for the employee to come to it. A warning light that turns on when something is missing creates friction that prompts action, whereas ticking off a checklist item depends on someone taking the initiative.

Three minutes to a follow-up query: why speed changes everything

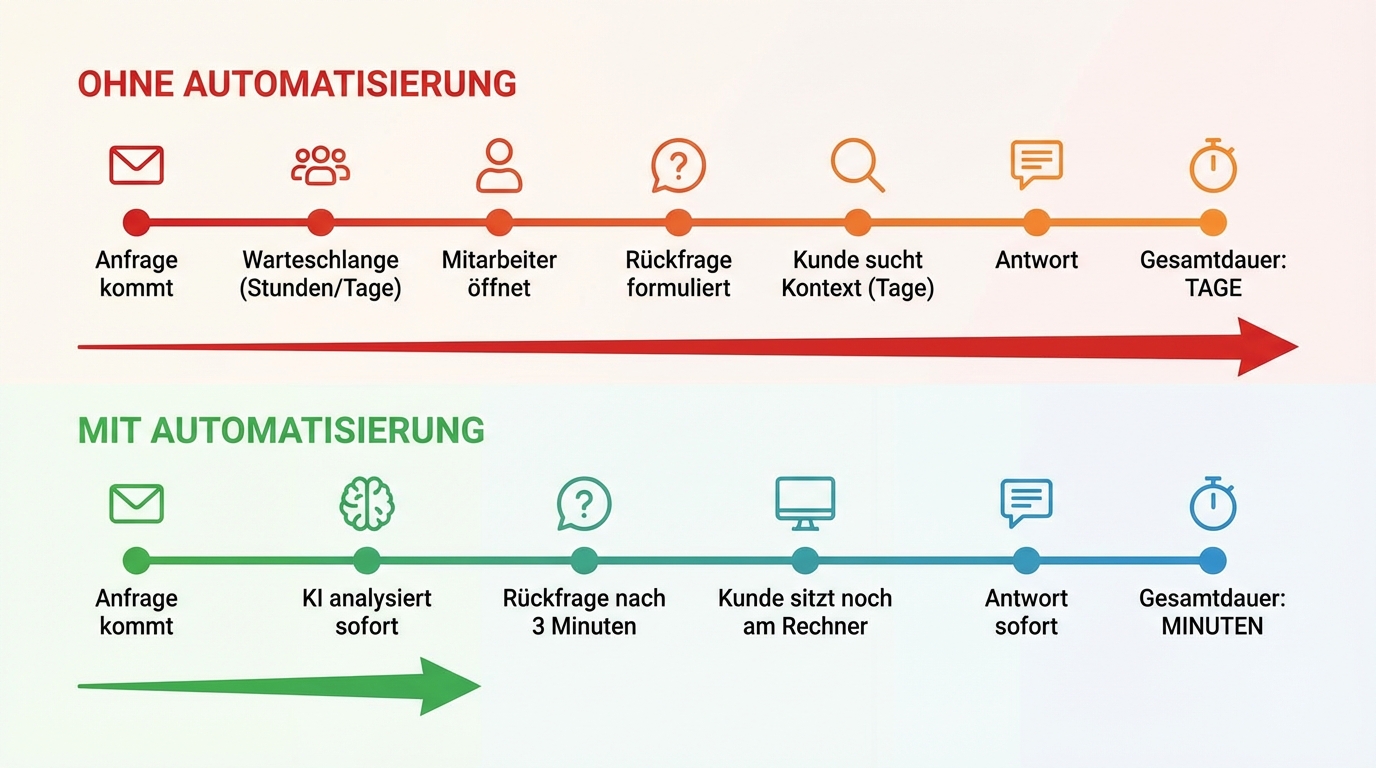

The decisive advantage of automatic follow-up queries is not completeness. It is speed.

When a customer submits their request, they are still at their desk. They are still in context, have their documents in front of them, know what it's about. If they receive a follow-up question within a few minutes, they can answer immediately. A quick look, add the serial number, confirm the delivery address. Done.

If the follow-up takes two days instead, the customer first has to find their case again, remember what they requested, dig out the documents again. Both sides lose time and attention. And often, the follow-up gets buried in the inbox.

The vision that has proven realistic across multiple projects: an incomplete request comes in, AI analyses it, three minutes later the follow-up query goes out. By the time the responsible employee opens the request for the first time, it is already complete.

Not just asking, but self-retrieving

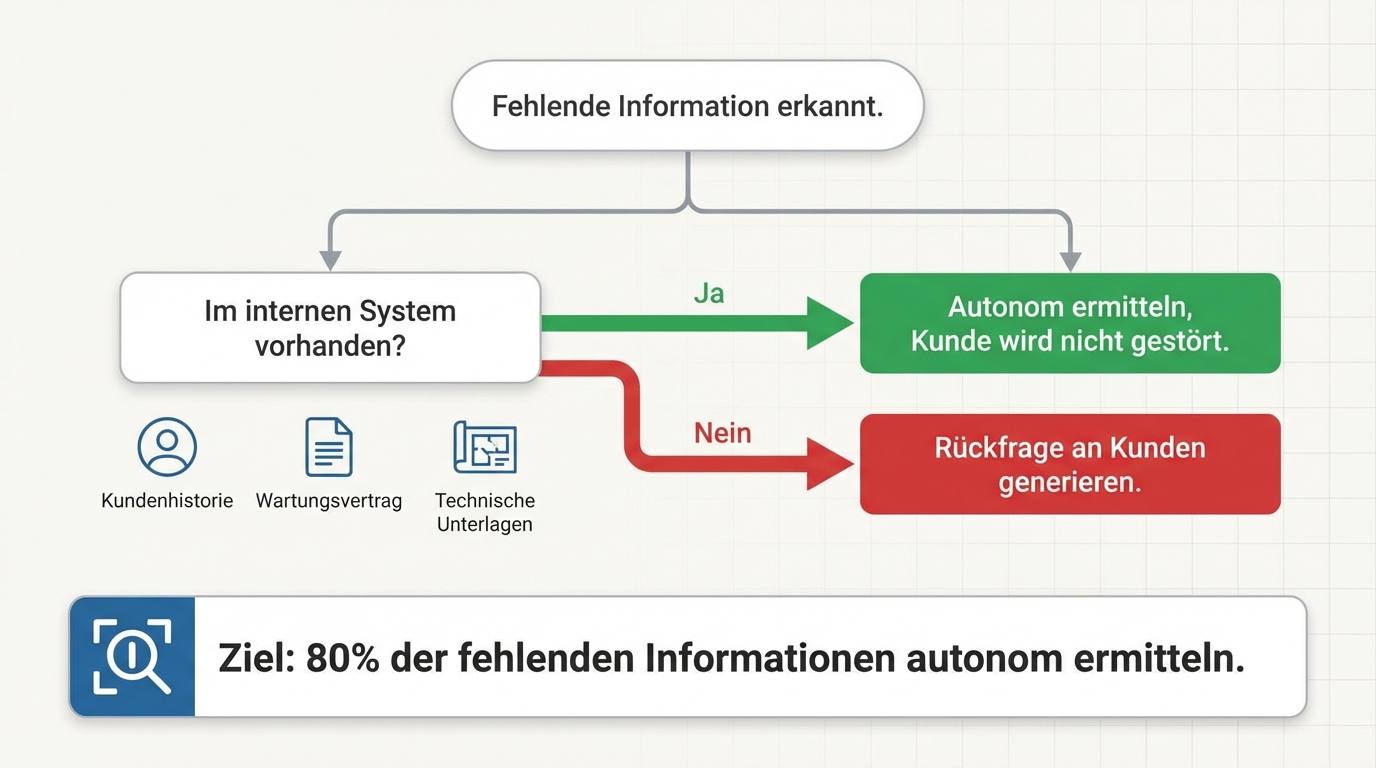

Automatic follow-up queries are the obvious approach. Often, though, the better approach is not to ask the customer for the missing information at all, but to retrieve it yourself.

A customer sends a request without a serial number. In many cases, AI can determine the serial number on its own from the customer history, the maintenance contract, or the technical documentation. When that works, the customer doesn't need to be bothered at all.

In practice, "asking the customer for missing information" and "retrieving missing information yourself" are the same principle: it's about obtaining missing data, only the method differs. When AI has access to internal systems, it chooses the faster path. When the information can only come from the customer, it generates the follow-up query.
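The choice between the two paths can be sketched in a few lines. This is a minimal illustration, not a description of any specific project: the function `resolve_missing`, the lookup signature, the customer ID, and the toy `HISTORY` data are all hypothetical stand-ins for real internal systems.

```python
from typing import Callable, Optional

# Hypothetical lookup type: takes a customer id and a field name,
# returns the value if an internal system knows it, otherwise None.
Lookup = Callable[[str, str], Optional[str]]


def resolve_missing(
    customer_id: str, missing_fields: list[str], lookups: list[Lookup]
) -> tuple[dict[str, str], list[str]]:
    """Try internal sources first; only unresolved fields go to the customer."""
    resolved: dict[str, str] = {}
    ask_customer: list[str] = []
    for field_name in missing_fields:
        value = None
        for lookup in lookups:
            value = lookup(customer_id, field_name)
            if value is not None:
                break  # first source that knows the answer wins
        if value is not None:
            resolved[field_name] = value
        else:
            ask_customer.append(field_name)
    return resolved, ask_customer


# Toy stand-in for a customer history system (invented data).
HISTORY = {("C-1001", "serial_number"): "SN-48213"}


def history_lookup(customer_id: str, field_name: str) -> Optional[str]:
    return HISTORY.get((customer_id, field_name))


resolved, ask_customer = resolve_missing(
    "C-1001", ["serial_number", "delivery_address"], [history_lookup]
)
```

In this toy run the serial number is found internally, so only the delivery address would end up in a follow-up query.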

In one project, the goal was to determine 80 percent of missing information autonomously, without any follow-up to the customer. That sounds ambitious, but is realistic when the data already exists in the available systems and simply hasn't been connected.

The domain expert defines the rules in natural language

How does the AI know what's missing from a request? Not from a rigid rule set written by a programmer, but from instructions formulated by the domain expert themselves.

"When a customer sends a spare parts request, we need: serial number or unit type, desired delivery deadline, and delivery address. If the unit type is BX-400, additionally the year of manufacture, because there are three variants."

These instructions are written in natural language, not in code. The domain expert maintains them. When a new request category appears that keeps leading to follow-up queries, they add another rule. No IT ticket, no developer, no deployment. The change takes effect on the next request.
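One way to picture this: the rules live as plain text and are placed into the instructions the AI receives with each request. The sketch below only assembles that text. The prompt wording, the function names `build_check_prompt` and `add_rule`, and the example of a newly added rule are assumptions for illustration; the actual call to a language model is deliberately left out, since no specific model or API is named here.

```python
# Rules exactly as the domain expert wrote them: plain sentences, not code.
RULES = [
    "When a customer sends a spare parts request, we need: serial number or "
    "unit type, desired delivery deadline, and delivery address.",
    "If the unit type is BX-400, additionally the year of manufacture, "
    "because there are three variants.",
]


def build_check_prompt(rules: list[str], request_text: str) -> str:
    """Combine the expert's rules and the incoming request into one instruction text."""
    numbered = "\n".join(f"{i}. {rule}" for i, rule in enumerate(rules, start=1))
    return (
        "You check incoming customer requests for completeness.\n"
        "Rules maintained by the domain expert:\n"
        f"{numbered}\n\n"
        "Request:\n"
        f"{request_text}\n\n"
        "List every required item that is missing from the request."
    )


def add_rule(rules: list[str], new_rule: str) -> list[str]:
    """Adding a rule is a text edit; the next prompt that is built includes it."""
    return [*rules, new_rule]


prompt = build_check_prompt(
    RULES,
    "Need a spare part for our unit, model BX-400, please send a quote.",
)
```

Because the prompt is rebuilt for every request, a rule added through `add_rule` applies to the next request without any change to the code.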

This approach has proven more robust than classic form logic because it can handle ambiguity. "We need an air conditioning system for our new warehouse" is not a form input. But an agent instructed to ask about floor area, ceiling height, and usage type for climate control requests can ask the right follow-up questions.

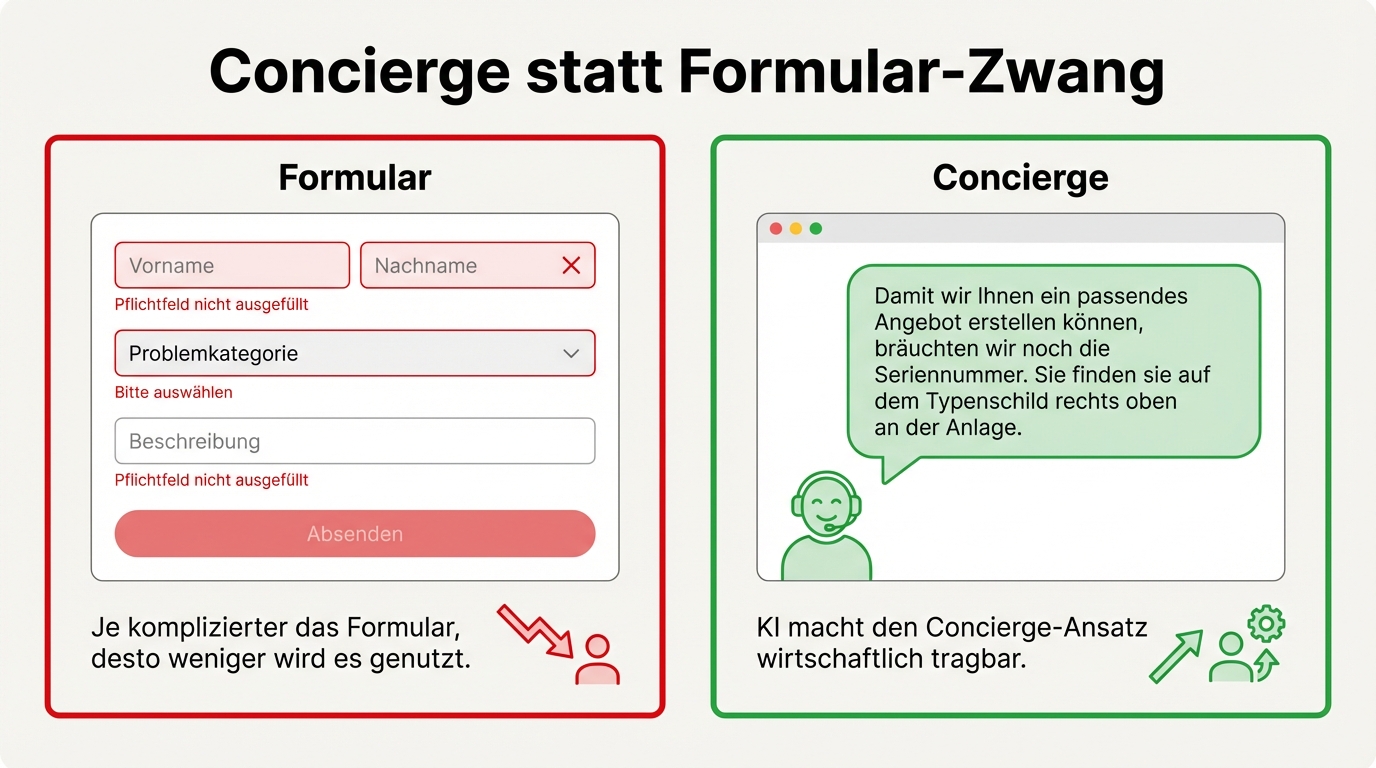

Concierge instead of forced forms

Many companies have tried to solve the problem of incomplete requests with better forms. More required fields, more detailed dropdown lists, mandatory inputs. Experience shows: the more complicated the form, the less it gets used.

The better approach is different: don't force the customer into a form, but meet them where they are. The AI acts like a concierge who helps the customer complete their request in a friendly manner. Instead of "required field not filled in", the customer gets: "So we can prepare a matching quote for you, we would also need the serial number. You can find it on the nameplate in the upper right of the unit."
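As a rough illustration of the difference in tone, the following sketch turns a list of missing items into a friendly message with optional hints. In practice the AI would phrase this text itself; the fixed template, the function `concierge_message`, and the `HINTS` table are simplifying assumptions.

```python
# Hypothetical hints supplied by the domain expert, keyed by the missing item.
HINTS = {
    "serial number": "You can find it on the nameplate in the upper right of the unit.",
}


def concierge_message(missing_items: list[str], hints: dict[str, str]) -> str:
    """Build a friendly follow-up instead of a bare 'required field' error."""
    if not missing_items:
        return ""  # nothing missing, nothing to ask
    lines = ["So we can prepare a matching quote for you, we would also need:"]
    for item in missing_items:
        line = f"- the {item}"
        hint = hints.get(item)
        if hint:
            line += f". {hint}"  # tell the customer where to find the item
        lines.append(line)
    return "\n".join(lines)


message = concierge_message(["serial number", "delivery address"], HINTS)
```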

This concierge service was previously not an option for cost reasons. An employee who reads every incoming request, identifies the missing information, and drafts a friendly follow-up query costs significantly more than AI doing the same in seconds. AI makes the concierge approach economically viable.

The quality question: imperfect beats nonexistent

What happens when the AI misses a piece of missing information? When it waves through a request as complete, even though something is missing?

The honest answer: that will happen. No system is perfect. But the benchmark matters. In companies where the manual completeness check is skipped in 98 percent of cases, even an imperfect automated system is a massive improvement. If the AI catches 95 percent of missing information and overlooks five percent, it still finds far more than the status quo, where the check almost never happens.
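The comparison can be made concrete with a small calculation. The figure of 1,000 incomplete requests is invented for illustration, and the sketch generously assumes that a manual check, in the 2 percent of cases where it happens, finds everything.

```python
def gaps_caught(incomplete_requests: int, check_rate: float, detection_rate: float) -> float:
    """Expected number of incomplete requests that are actually flagged."""
    return incomplete_requests * check_rate * detection_rate


# Assumption: 1,000 incomplete requests (hypothetical volume).
# Manual: the check happens in 2 percent of cases and is assumed to be perfect then.
manual = gaps_caught(1000, check_rate=0.02, detection_rate=1.0)

# Automated: every request is checked, 95 percent of gaps are detected.
automated = gaps_caught(1000, check_rate=1.0, detection_rate=0.95)
```

Under these assumptions the manual process flags about 20 of the 1,000 incomplete requests, the automated one about 950.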

Quality can be measured and improved. Systematic monitoring shows which request types the AI checks reliably and where it has weaknesses. Those weaknesses are addressed specifically by the domain expert adding new rules. Over time, the system keeps getting better, because every identified error triggers a correction that applies to all future cases.
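A simple form of such monitoring is to compare, on a reviewed sample, what was actually missing with what the AI flagged, grouped by request type. The sketch below assumes a reviewer has labelled each sample; the tuple layout, the function name, and the sample data are hypothetical.

```python
from collections import defaultdict


def detection_rate_by_type(
    samples: list[tuple[str, set[str], set[str]]]
) -> dict[str, float]:
    """samples: (request_type, truly_missing_items, items_flagged_by_ai)."""
    found: dict[str, int] = defaultdict(int)
    total: dict[str, int] = defaultdict(int)
    for request_type, truly_missing, flagged in samples:
        total[request_type] += len(truly_missing)
        found[request_type] += len(truly_missing & flagged)  # correctly flagged gaps
    # Skip request types where nothing was missing in the sample.
    return {t: found[t] / total[t] for t in total if total[t] > 0}


# Invented review sample for illustration.
SAMPLE = [
    ("spare_parts", {"serial number", "delivery address"}, {"serial number", "delivery address"}),
    ("spare_parts", {"year of manufacture"}, set()),
    ("climate_control", {"floor area", "ceiling height"}, {"floor area"}),
]

rates = detection_rate_by_type(SAMPLE)
```

Request types with a low rate are the ones where the domain expert would look at adding or sharpening rules.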

Repeat customers need special context

A regular customer who has been ordering the same thing for years sends a brief email: "Same order as usual, but 300 instead of 200 units this time." An experienced employee immediately knows what's meant. AI without context information would ask: "Which product do you mean? Which specification?"

The best solution: before going live, go through the most important regular customers and load the context into the system. Which products does this customer order regularly? What special conditions apply? What implicit agreements exist?
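What "loading the context" could look like is sketched below: one record per regular customer, rendered as text that is handed to the AI together with the incoming request. The class `CustomerContext`, its field names, and the example customer are illustrative assumptions, not a prescribed data model.

```python
from dataclasses import dataclass, field


@dataclass
class CustomerContext:
    """Hypothetical record answering the three questions above for one customer."""
    customer: str
    usual_products: list[str] = field(default_factory=list)
    special_conditions: list[str] = field(default_factory=list)
    implicit_agreements: list[str] = field(default_factory=list)

    def as_prompt_text(self) -> str:
        """Render the known context as plain text; empty sections are left out."""
        sections = [
            ("Regularly ordered products", self.usual_products),
            ("Special conditions", self.special_conditions),
            ("Implicit agreements", self.implicit_agreements),
        ]
        lines = [f"Known context for {self.customer}:"]
        for title, entries in sections:
            if entries:
                lines.append(f"{title}: " + "; ".join(entries))
        return "\n".join(lines)


# Invented example customer.
context = CustomerContext(
    customer="Example Customer Ltd",
    usual_products=["filter cartridge, 200 units per order"],
)
context_text = context.as_prompt_text()
```

With this text available, "same order as usual" has something to refer to, and the AI has less reason to ask which product is meant.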

That sounds like effort, but has a valuable side effect: knowledge that previously existed only in the heads of individual sales reps is documented for the first time. When the account manager goes on holiday or leaves the company, the knowledge isn't lost; it's in the system.

Completeness checking as the first step

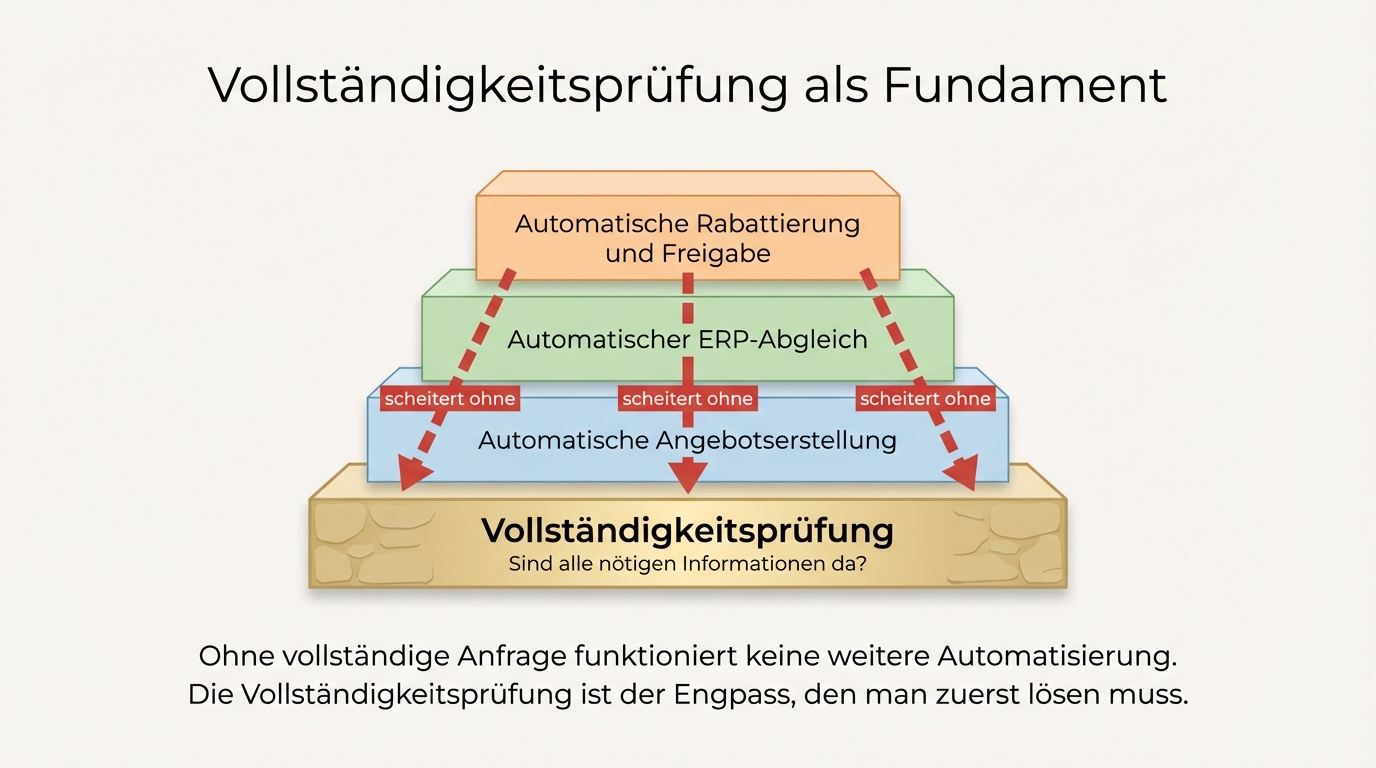

Companies thinking about AI automation are best served by starting with completeness checking. Not because it's the most spectacular use case, but because it lays the foundation for everything that follows.

Without a complete request, no automatic quote generation. Without structured data, no automatic ERP reconciliation. Without clear customer identification, no automatic discount. The completeness check is the bottleneck: if it isn't solved, every subsequent automation fails.

Getting started is comparatively simple: select one request type, go through the required information with the domain expert, formulate the rules, and test the system on a sample. Results become visible quickly, because the improvement over the status quo (little or no checking) is so striking.