A consulting firm writes hundreds of proposals per year. Each one starts the same way: open the template, find an old proposal to a similar client, copy the relevant passages, adapt, rephrase, insert company details, check the formatting. Four to eight hours per proposal, significantly more for complex tenders. Most of that time doesn't go into thinking -- it goes into assembling and adapting text blocks that already exist in some form.

AI changes this process. Not by inventing proposals out of thin air, but by doing what the human does too: finding existing content, putting it into the right structure, and adapting it to the new context. The difference: AI does it in minutes instead of hours, and it doesn't forget a single reference document.

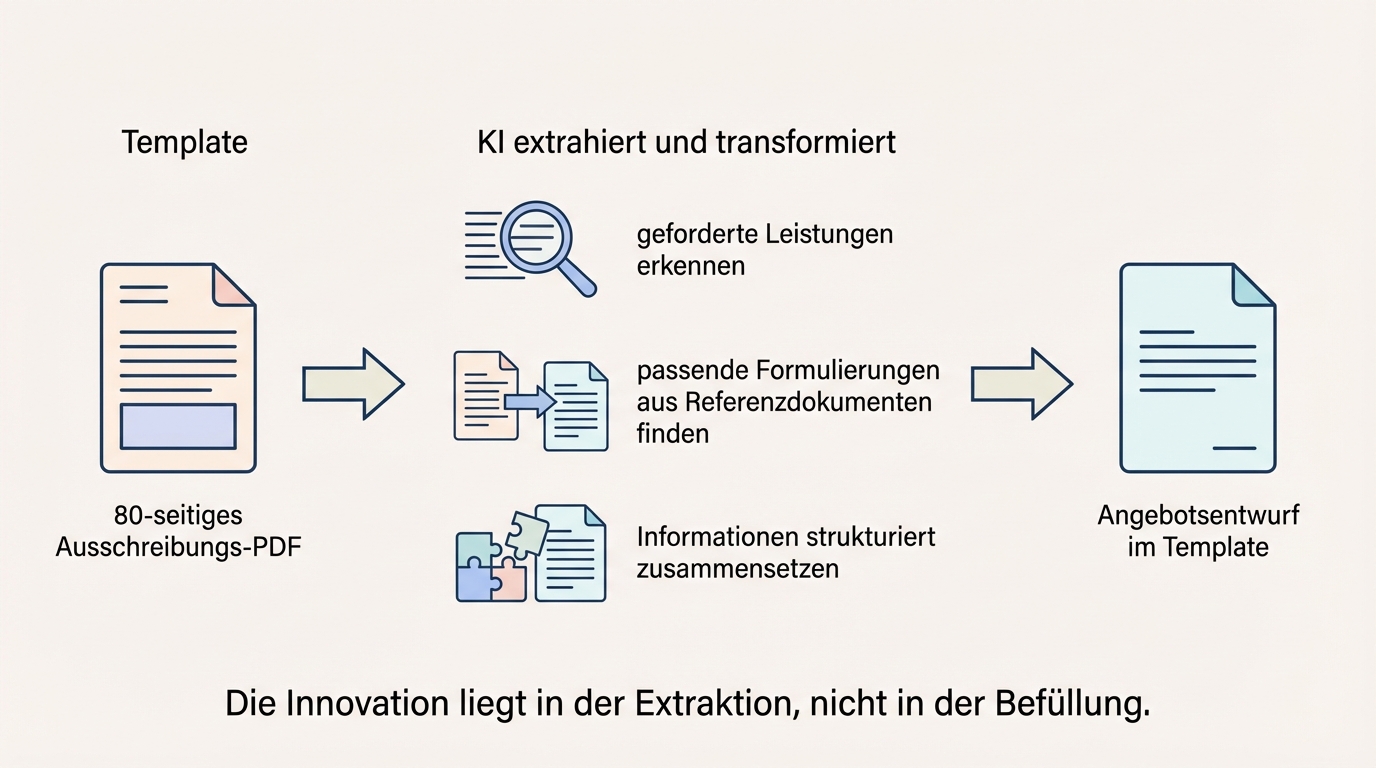

The innovation is in extraction, not in filling

Template filling has existed for decades. Mail merge functions have been inserting customer names and addresses into templates since word processing began. There's nothing new about that.

What is new: AI can read unstructured sources, extract the relevant content, and transform it so that it fits into a structured document. A tender arrives as an 80-page PDF. The AI reads it, identifies the required services, recognises the technical requirements, and maps them to the correct sections of the proposal template. In addition, it searches older proposals for matching phrasing and service descriptions. The result: a proposal draft that isn't generated, but intelligently assembled.

This reframing matters because it sets the right expectations. The AI is not asked to invent service descriptions. It takes what's in the reference documents and adapts it. The quality of the output therefore depends directly on the quality of the input.

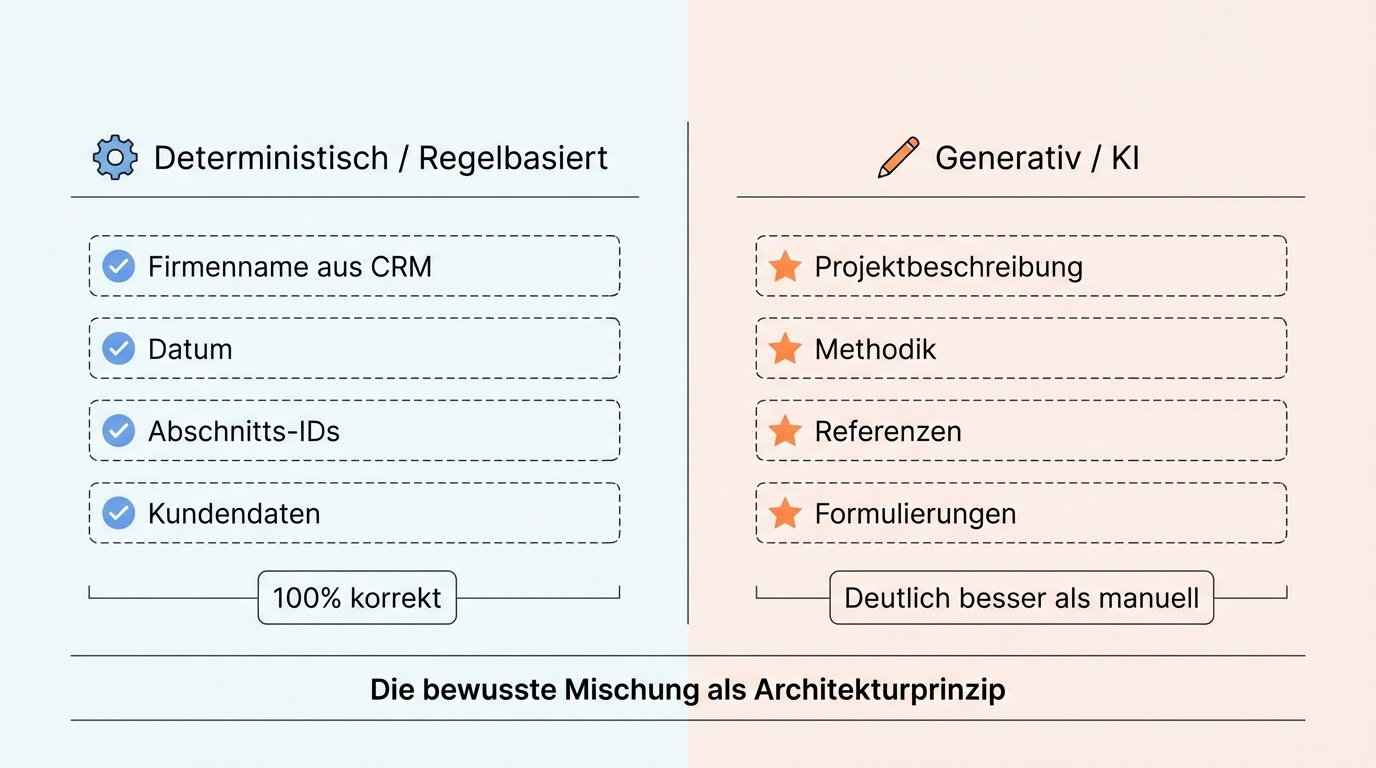

Deterministic fields and generative fields: the right mix decides

A reliable document generation system doesn't treat every field the same. The company name comes from the CRM -- deterministic, rule-based. No AI needed there. The project references come from the archive, selected and adapted to the current tender. That's where AI is needed.

The art lies in choosing the right method for each field in the template. A concrete example: when extracting section IDs from a tender, a rule-based method is more reliable than a language model, because with long alphanumeric codes even a good model gets a digit wrong in about half a percent of cases. For body text such as project descriptions and methodology sections, on the other hand, the language model is clearly superior.
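A minimal sketch of the rule-based side, assuming a made-up ID format: the pattern below (letters, year, serial number, optional suffix) is purely illustrative and would have to be replaced by whatever scheme the actual tender uses. The point is that a regular expression copies the matched characters verbatim and cannot alter a digit.

```python
import re

# Hypothetical ID format, chosen for illustration only:
# 2-3 capital letters, a four-digit year, a five-digit serial number
# and an optional one-letter suffix, e.g. "LV-2024-00417-A".
SECTION_ID = re.compile(r"\b[A-Z]{2,3}-\d{4}-\d{5}(?:-[A-Z])?\b")


def extract_section_ids(text: str) -> list[str]:
    """Return all section IDs in order of first appearance, without duplicates."""
    seen: dict[str, None] = {}
    for match in SECTION_ID.finditer(text):
        seen.setdefault(match.group(0), None)
    return list(seen)


tender_text = (
    "Section LV-2024-00417-A covers hosting. "
    "See also LV-2024-00418 and, again, LV-2024-00417-A."
)
print(extract_section_ids(tender_text))
# ['LV-2024-00417-A', 'LV-2024-00418']
```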

In a project where a single template contained over 100 variable fields, this distinction became an architectural principle. Some fields are filled by rules (title, date, client data), others generatively (project description, methodology, references). The deliberate mix of both makes the difference between a system that works reliably and one that produces surprising results.
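One way to make this principle explicit is to declare the fill method per field and dispatch on it. The sketch below is an assumption about how such a declaration could look, not the structure used in the project; `FieldSpec`, `fill_template` and `stub_generate` are hypothetical names, and the stub stands in for a real language model call.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class FieldSpec:
    name: str    # placeholder name in the template
    method: str  # "rule" or "generative"
    source: str  # record key for rule fields, prompt text for generative fields


def fill_template(
    specs: list[FieldSpec],
    record: dict[str, str],
    generate: Callable[[str], str],
) -> dict[str, str]:
    """Fill rule fields from structured data and generative fields via the model."""
    values: dict[str, str] = {}
    for spec in specs:
        if spec.method == "rule":
            # Deterministic: copied verbatim from the record (e.g. CRM data).
            values[spec.name] = record[spec.source]
        elif spec.method == "generative":
            values[spec.name] = generate(spec.source)
        else:
            raise ValueError(f"unknown method for field {spec.name!r}: {spec.method!r}")
    return values


def stub_generate(prompt: str) -> str:
    """Stand-in for a language model call (hypothetical)."""
    return f"[draft] {prompt}"


SPECS = [
    FieldSpec("client_name", "rule", "client_name"),
    FieldSpec("date", "rule", "date"),
    FieldSpec("methodology", "generative", "Describe the methodology for this tender."),
]
RECORD = {"client_name": "Example GmbH", "date": "2025-01-15"}

filled = fill_template(SPECS, RECORD, stub_generate)
print(filled["client_name"])
```

Because the method is part of the field declaration, moving a field from one category to the other is a one-line change rather than a change in program logic.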

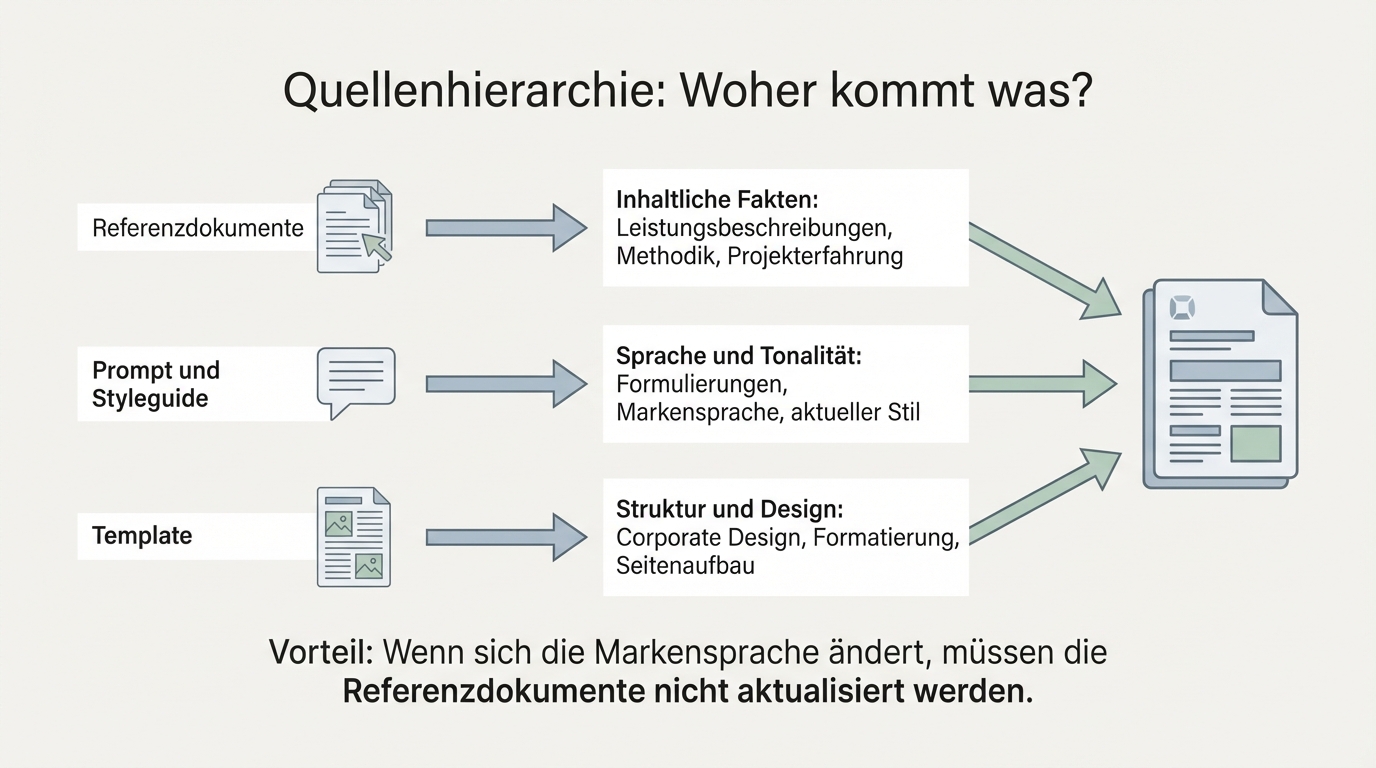

Reference documents are a blessing and a curse

The strongest source for a good proposal is the last good proposal to a similar client. Experienced sales reps know this and regularly copy from their best templates. AI does the same, only more systematically.

But reference documents bring a subtle problem: their language infects the AI's language. If an old proposal contains poor phrasing or outdated terminology, those resurface in the new proposal. The AI doesn't automatically distinguish between what is substantively valuable and what is linguistically outdated.

The solution is a source hierarchy. For each section in the template, you control which source dominates: reference documents provide the factual content (how the service was delivered, what methodology was used), while language and tone come from the prompt or a style guide. Brand elements come from the template itself.

This separation has a practical advantage: when brand language changes, the reference documents don't need updating. The system continues to draw factual content from old proposals but uses the new language from the updated prompt.
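The separation of facts and wording can be sketched as a prompt builder that takes the two sources as separate inputs. The function name, the instruction wording and the sample texts below are assumptions made for illustration; a production prompt would be more elaborate.

```python
def build_section_prompt(section: str, reference_excerpt: str, style_guide: str) -> str:
    """Combine sources so that the reference supplies facts and the style guide supplies tone."""
    return (
        f"Write the '{section}' section of a proposal.\n"
        "Use the reference excerpt only for facts: what was delivered and which "
        "methodology was used. Do not reuse its wording.\n\n"
        f"Style guide (governs wording and tone):\n{style_guide}\n\n"
        f"Reference excerpt (facts only):\n{reference_excerpt}\n"
    )


# Hypothetical inputs for illustration.
reference = "In 2021 we migrated 40,000 contracts to the new archive in three phases."
old_style = "Formal tone. Refer to the reader as 'the customer'."
new_style = "Direct, active voice. Refer to the reader as 'the client'."

prompt_before = build_section_prompt("References", reference, old_style)
prompt_after = build_section_prompt("References", reference, new_style)

# The reference excerpt is untouched; only the style input changed.
print(reference in prompt_before and reference in prompt_after)
```

Swapping `old_style` for `new_style` changes the prompt without touching the archive of old proposals, which is the practical advantage described above.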

70 percent quality from the first draft

Sending an AI-generated proposal to a client unchecked would be reckless. That's not the goal either. The goal is a draft that's good enough that the human has significantly less work than writing from scratch.

In practice, generated documents typically land at around 70 percent quality. That sounds low, but it's an enormous lever: 70 percent means the basic structure is in place, most of the content is correctly positioned, and the domain expert only needs to refine specific areas. Instead of filling a blank document, they work from a solid draft.

The remaining 30 percent are the parts that need human judgement: phrasing that has to fit the specific client, substantive nuances that only the domain expert knows, or sections where no adequate reference existed. That work stays with the human, but the total effort drops substantially.

Don't automate everything: the deliberate boundary at 85 percent

Every company has edge cases. Proposals that need to pass through multiple departments, require sign-off from the executive team, or are so unique that no reference document helps.

Deliberately excluding these cases isn't failure; it's a strategic decision. Experience shows that roughly 85 percent of documents are suitable for AI-assisted generation. The remaining 15 percent are too complex, too risky, or too unique. Keeping those manual and investing the energy in the quality of the automated 85 percent is the economically smarter path.

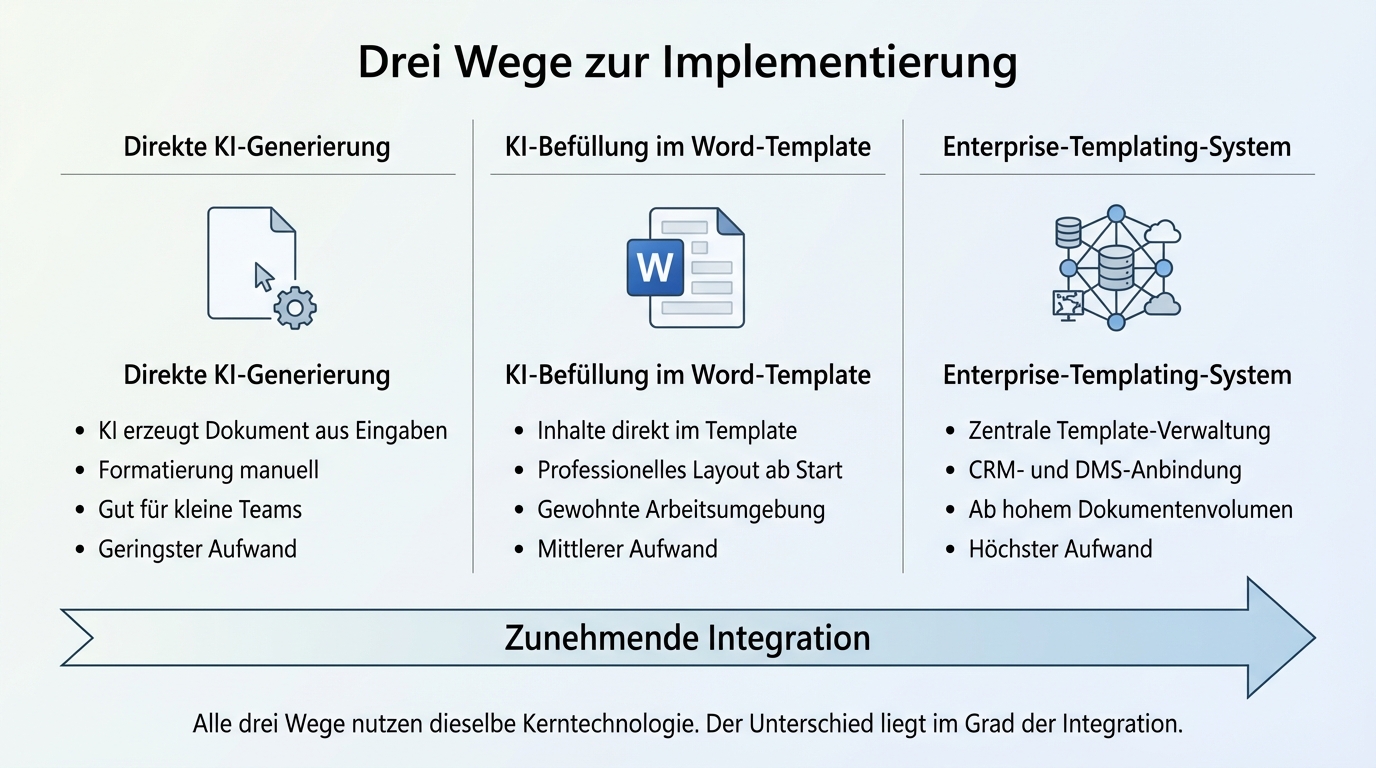

Three paths to implementation

Not every company needs the same solution. Implementation complexity depends on document volume and existing systems.

Direct AI generation: The AI produces a document based on inputs and references. Simple to implement, well suited for smaller teams with few document types. The content is there; formatting happens manually afterwards.

AI filling into a Word template: The AI generates content directly into an existing Word template. The document looks professional from the start because corporate design and layout structure are baked into the template. The user works in their familiar environment and sees the fully populated document directly in Word.

Enterprise templating system: For companies with high document volume, a specialised templating system manages templates centrally and is coupled with CRM, document management, and proposal platforms. This pays off at a scale where central template management justifies the additional effort.

All three paths use the same core technology. The difference lies in the degree of integration, not in the quality of the generated content.

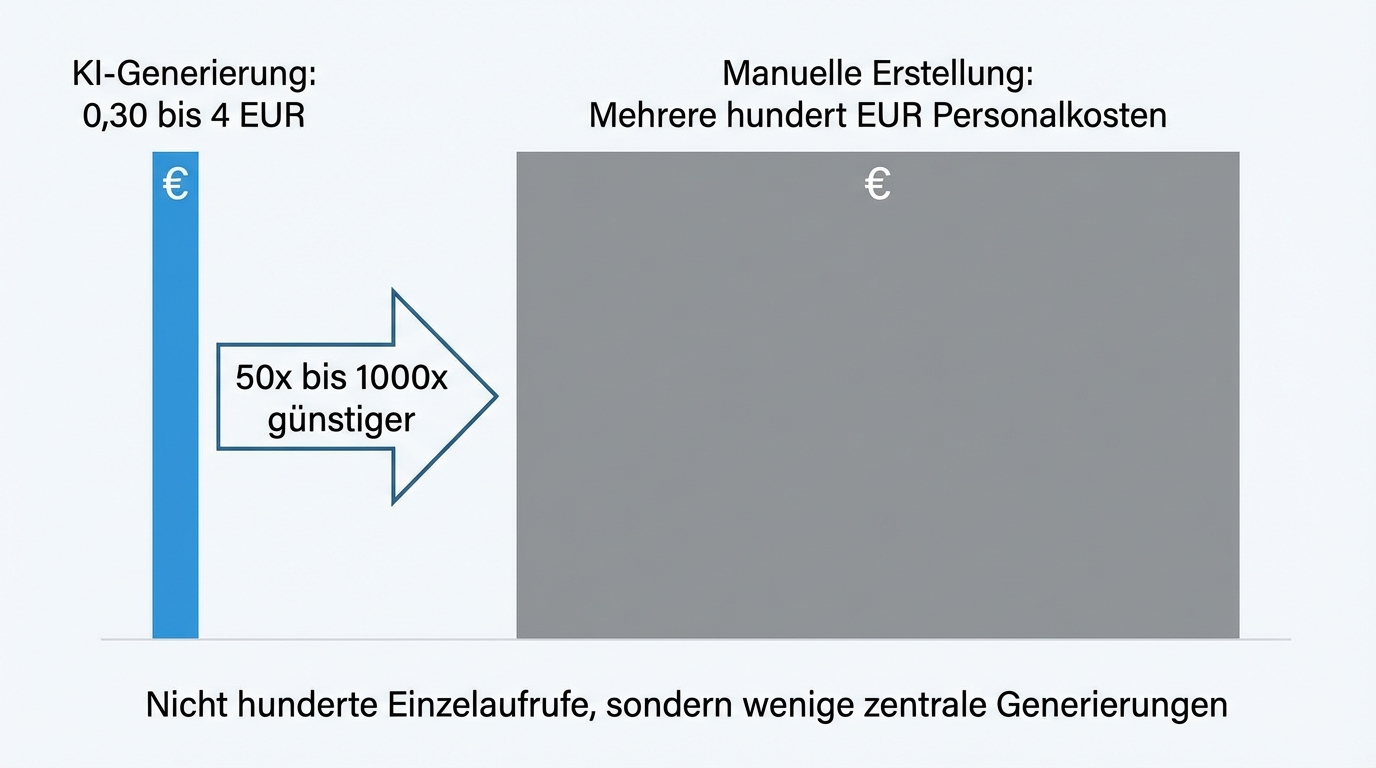

What it costs: 30 cents to 4 euros per document

Operating costs are lower than most expect. A document with many variable fields looks at first glance like it would require hundreds of AI calls. In practice, a few central generation steps are triggered upfront, and the individual fields are filled from those results.
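A rough sketch of why the number of calls stays small: one generation step returns a structured result, and many template fields read from it. Everything here is hypothetical, including the JSON keys, the field names and `fake_model_call`, which stands in for a real model call.

```python
import json
from typing import Callable


def fake_model_call(prompt: str) -> str:
    """Stand-in for a single model call that returns a JSON object (hypothetical)."""
    return json.dumps(
        {
            "summary": "Migration of the document archive.",
            "scope": "Analysis, migration, training.",
            "duration": "12 weeks",
        }
    )


# Several template fields point at the same key of the shared result.
FIELD_TO_KEY = {
    "cover_summary": "summary",
    "intro_summary": "summary",
    "scope_table": "scope",
    "scope_text": "scope",
    "timeline": "duration",
}


def fill_from_shared_result(
    field_to_key: dict[str, str],
    call_model: Callable[[str], str],
) -> tuple[dict[str, str], int]:
    """Fill all fields from one generation step; also return the number of model calls."""
    calls = 0
    result = json.loads(call_model("Extract summary, scope and duration from the tender."))
    calls += 1
    values = {field: result[key] for field, key in field_to_key.items()}
    return values, calls


values, calls = fill_from_shared_result(FIELD_TO_KEY, fake_model_call)
print(f"{len(values)} fields filled with {calls} model call(s)")
# 5 fields filled with 1 model call(s)
```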

Measured in practice, costs range from 30 cents to 4 euros per document, depending on document length and source complexity. Against that stands a time saving that, for complex proposals, amounts to several hundred euros in personnel cost per case. The cost-to-benefit ratio is so clear that optimising operating costs hasn't been necessary in any project so far.
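The ratio can be made concrete with a back-of-the-envelope calculation. Only the upper cost bound of 4 euros is taken from the figures above; the hours saved and the hourly rate are illustrative placeholders, not measurements from any project.

```python
def net_saving_per_document(hours_saved: float, hourly_rate_eur: float, ai_cost_eur: float) -> float:
    """Personnel cost saved minus the AI operating cost for one document, in euros."""
    return hours_saved * hourly_rate_eur - ai_cost_eur


# Assumed for illustration: 4 hours saved at 60 euros per hour.
# The AI cost uses the upper bound of 4 euros per document.
print(net_saving_per_document(hours_saved=4, hourly_rate_eur=60.0, ai_cost_eur=4.0))
# 236.0
```

Even at the upper end of the cost range, the operating cost is a small fraction of the assumed saving.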

Quality improves with use

Every generated document delivers feedback. Which phrases were kept? Which were adjusted? Where did the employee rewrite completely? This feedback flows into improving the prompts and the source hierarchy.
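One simple way to capture this feedback is to compare the generated text with the version the employee finally sent, section by section. The sketch below uses Python's standard `difflib` for a word-level similarity score; the threshold of 0.5 and the sample texts are assumptions for illustration, and a real system would likely track more than a single number.

```python
from difflib import SequenceMatcher


def kept_ratio(generated: str, final: str) -> float:
    """Word-level similarity between draft and final text (1.0 means unchanged)."""
    return SequenceMatcher(None, generated.split(), final.split()).ratio()


def sections_to_review(
    generated: dict[str, str],
    final: dict[str, str],
    threshold: float = 0.5,  # assumed cut-off, to be tuned per project
) -> list[str]:
    """Return the sections that were largely rewritten by the human editor."""
    return [
        name
        for name, draft in generated.items()
        if kept_ratio(draft, final.get(name, "")) < threshold
    ]


generated = {
    "scope": "We migrate the archive in three phases.",
    "methodology": "Our approach is agile and iterative.",
}
final = {
    "scope": "We migrate the archive in three phases.",
    "methodology": "The team works in fixed two-week sprints with a review after each.",
}

print(sections_to_review(generated, final))
# ['methodology']
```

Sections that show up repeatedly in this list are candidates for a revised prompt or a different reference source.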

In one project, the quality of generated proposals improved over the course of a few months to the point where the system became known across the entire company as a success story for AI adoption. The key wasn't a single technical breakthrough, but consistent collaboration between domain experts and the AI system. Every correction made the next document better.

Each prompt should need only one domain expert to maintain it. When multiple domains are involved, prompts are scoped so that each expert can maintain their area independently. This prevents coordination overhead and contradictions between different knowledge holders.